Unlocking Physical AI: Utilizing Virtual Simulation Data for Advanced Development

Virtual simulation data is accelerating the development of physical AI in corporate settings, with projects like Ai2’s MolmoBot at the forefront. Teaching hardware to interact with the physical world has traditionally been expensive because it relies heavily on manually gathered demonstrations. Most advanced manipulation agents are built on extensive, costly real-world training data.

Consider DROID, which collected 76,000 teleoperated trajectories across 13 institutions, an effort that required approximately 350 hours of human input. Similarly, Google DeepMind’s RT-1 required 130,000 episodes gathered by human operators over 17 months. This reliance on costly, proprietary data collection is a barrier that limits access and innovation largely to well-funded research teams.

“Our mission is to build AI that propels scientific advancement and broadens human discovery,” stated Ali Farhadi, CEO of Ai2. “Robotics can quickly become a foundational scientific tool, enabling researchers to explore new territories. Our goal is to foster systems that can generalize in real-world contexts and provide tools for the global research community.” Demonstrating successful transitions from simulation to reality is a crucial step in this direction.

A Revolutionary Economic Model: MolmoBot

Researchers from the Allen Institute for AI (Ai2) propose a different economic model with MolmoBot, an open robotic manipulation suite built on synthetic data. By generating trajectories in a system called MolmoSpaces, the team avoids human teleoperation entirely.

The associated dataset, MolmoBot-Data, contains 1.8 million expert manipulation trajectories. It was generated with the MuJoCo physics engine combined with aggressive domain randomization, which varies object characteristics, camera angles, lighting conditions, and dynamics.
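To make the idea of domain randomization concrete, here is a minimal sketch of per-episode parameter sampling. It is not Ai2's pipeline and does not call MuJoCo: the `EpisodeConfig` fields, the `RANGES` values, and the function names are all hypothetical, chosen only to mirror the four categories named above (object characteristics, camera angles, lighting, and dynamics).

```python
import random
from dataclasses import dataclass, fields

@dataclass(frozen=True)
class EpisodeConfig:
    """One randomized draw of simulation settings (hypothetical fields)."""
    object_mass_kg: float    # object characteristic
    camera_yaw_deg: float    # camera angle
    light_intensity: float   # lighting condition
    friction_coeff: float    # dynamics

# Illustrative ranges only; these are assumptions, not values from the article.
RANGES = {
    "object_mass_kg": (0.05, 2.0),
    "camera_yaw_deg": (-30.0, 30.0),
    "light_intensity": (0.2, 1.5),
    "friction_coeff": (0.3, 1.2),
}

def sample_episode_config(rng: random.Random) -> EpisodeConfig:
    """Draw every parameter independently and uniformly from its range."""
    values = {f.name: rng.uniform(*RANGES[f.name]) for f in fields(EpisodeConfig)}
    return EpisodeConfig(**values)

def sample_batch(n: int, seed: int) -> list:
    """Sample n episode configurations reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [sample_episode_config(rng) for _ in range(n)]

if __name__ == "__main__":
    for cfg in sample_batch(3, seed=0):
        print(cfg)
```

In a real pipeline each sampled configuration would be written into the simulator's scene before an episode is rolled out. Seeding the generator keeps a generated dataset reproducible.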

“While many strategies aim to close the simulation-to-reality gap by accumulating more real-world data, we took a different approach,” explained Ranjay Krishna, Director of the PRIOR team at Ai2. “We believe that enhancing the diversity of simulated environments, objects, and conditions will bridge this gap more effectively. Our focus is shifting from manual data collection to the design of superior virtual worlds—a challenge we know we can tackle.”

Optimizing Data Generation for Physical AI

Using 100 Nvidia A100 GPUs, the new pipeline generated about 1,024 episodes per GPU-hour, which corresponds to more than 130 hours of robot experience for every hour of processing time. That is nearly four times the throughput of traditional data collection, which improves project ROI by shortening deployment timelines.
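The quoted rate can be turned into rough compute estimates with simple arithmetic. The sketch below uses only figures stated in the article (1,024 episodes per GPU-hour, 100 GPUs, 1.8 million trajectories) and assumes, which the article does not state, that the whole dataset was generated at that rate with every episode kept. The function names are illustrative.

```python
EPISODES_PER_GPU_HOUR = 1024        # rate quoted in the article
NUM_GPUS = 100                      # GPU count quoted in the article
DATASET_TRAJECTORIES = 1_800_000    # size of MolmoBot-Data per the article

def fleet_episodes_per_hour(per_gpu_hour: float, num_gpus: int) -> float:
    """Episodes produced per wall-clock hour when all GPUs run in parallel."""
    return per_gpu_hour * num_gpus

def gpu_hours_needed(trajectories: int, per_gpu_hour: float) -> float:
    """Total GPU-hours to generate the given number of trajectories."""
    return trajectories / per_gpu_hour

def wall_clock_hours(trajectories: int, per_gpu_hour: float, num_gpus: int) -> float:
    """Wall-clock hours, assuming perfect parallel scaling across GPUs."""
    return gpu_hours_needed(trajectories, per_gpu_hour) / num_gpus

if __name__ == "__main__":
    print(fleet_episodes_per_hour(EPISODES_PER_GPU_HOUR, NUM_GPUS))
    print(gpu_hours_needed(DATASET_TRAJECTORIES, EPISODES_PER_GPU_HOUR))
    print(wall_clock_hours(DATASET_TRAJECTORIES, EPISODES_PER_GPU_HOUR, NUM_GPUS))
```

Under these assumptions the fleet produces 102,400 episodes per wall-clock hour, and 1.8 million trajectories take roughly 1,758 GPU-hours, or about 17.6 hours on 100 GPUs. Real runs would likely take longer if some episodes are discarded or if scaling is imperfect.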

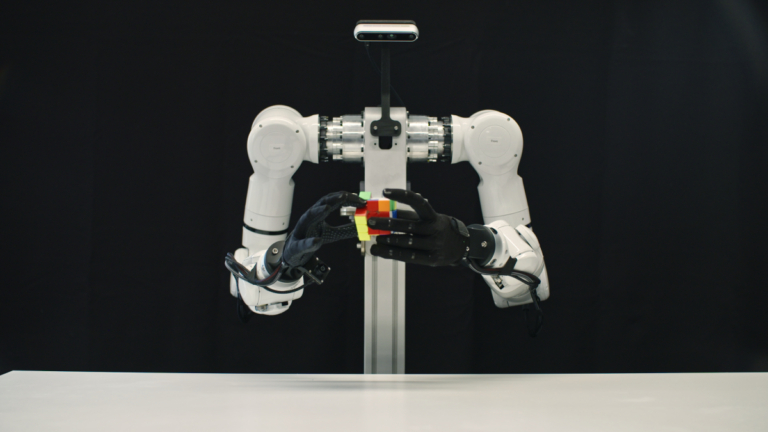

The MolmoBot suite features three distinct policy classes evaluated on two platforms: the Rainbow Robotics RB-Y1 mobile manipulator and the Franka FR3 tabletop arm. The primary model, powered by a Molmo2 vision-language backbone, processes multiple time steps of RGB observations alongside language instructions to determine appropriate actions.
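As an illustration of what "multiple time steps of RGB observations alongside language instructions" can look like at the input level, here is a minimal sketch of a sliding window over recent frames. The `ObservationHistory` class, its padding rule, and the dictionary layout are assumptions made for this example; the article does not describe how MolmoBot actually batches its inputs.

```python
from collections import deque
from typing import Any, Dict

class ObservationHistory:
    """Keeps the most recent num_steps frames for a policy (hypothetical helper)."""

    def __init__(self, num_steps: int) -> None:
        if num_steps < 1:
            raise ValueError("num_steps must be at least 1")
        self._num_steps = num_steps
        # A bounded deque drops the oldest frame automatically when full.
        self._frames: deque = deque(maxlen=num_steps)

    def push(self, frame: Any) -> None:
        """Record the newest RGB frame (any array-like object)."""
        self._frames.append(frame)

    def policy_input(self, instruction: str) -> Dict[str, Any]:
        """Bundle the frame window, oldest first, with the language instruction."""
        if not self._frames:
            raise ValueError("no observations recorded yet")
        frames = list(self._frames)
        # Early in an episode, pad by repeating the oldest available frame.
        padding = [frames[0]] * (self._num_steps - len(frames))
        return {"frames": padding + frames, "instruction": instruction}

if __name__ == "__main__":
    history = ObservationHistory(num_steps=3)
    for name in ["frame0", "frame1", "frame2", "frame3"]:
        history.push(name)
    print(history.policy_input("pick up the mug"))
```

Repeating the oldest frame is only one possible padding choice. Zero frames or a variable-length window would work equally well in a sketch like this.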

Emphasizing Hardware Flexibility

For resource-constrained environments, the research team has introduced MolmoBot-SPOC, a lightweight transformer policy with fewer parameters. Additionally, MolmoBot-Pi0 uses a PaliGemma backbone designed to align closely with the architecture of Physical Intelligence’s π0 model, which allows direct performance comparisons.

In practical tests, these policies transferred zero-shot to real-world tasks, handling previously unseen objects and environments without any fine-tuning. In tabletop pick-and-place evaluations, the primary MolmoBot model achieved a success rate of 79.2%, well above the 39.2% achieved by the π0.5 model, which was trained on extensive real-world demonstrations. Mobile manipulation tasks, including approaching, grasping, and door-pulling, were also completed across their operational range.

By providing several architectures, Ai2 lets organizations integrate advanced physical AI systems without being tied to a single vendor or to expensive data-gathering infrastructure.

The entire MolmoBot stack is openly available, including training data, generation pipelines, and model architectures, which allows in-house auditing and customization. Anyone working on physical AI can use these resources for simulation and system development while keeping costs under control.

“For AI to genuinely advance science, progress must not hinge on isolated systems or closed data,” continued Ali Farhadi. “It calls for a shared infrastructure—one competitors and researchers alike can utilize for building, testing, and enriching our collective knowledge. This is the pathway we envision for physical AI.”
