Revolutionizing Content Moderation: Insights from a Facebook Insider for the AI-Driven Future

The digital landscape is evolving at lightning speed, and with it comes a pressing need for effective content moderation. As platforms like Facebook grapple with increasing volumes of user-generated content, the focus is shifting towards harnessing artificial intelligence to create a safer online environment. This transformation isn’t simply about implementing technology; it’s about reshaping how we experience social media in a responsible and enriching manner.

Understanding the Role of AI in Content Moderation

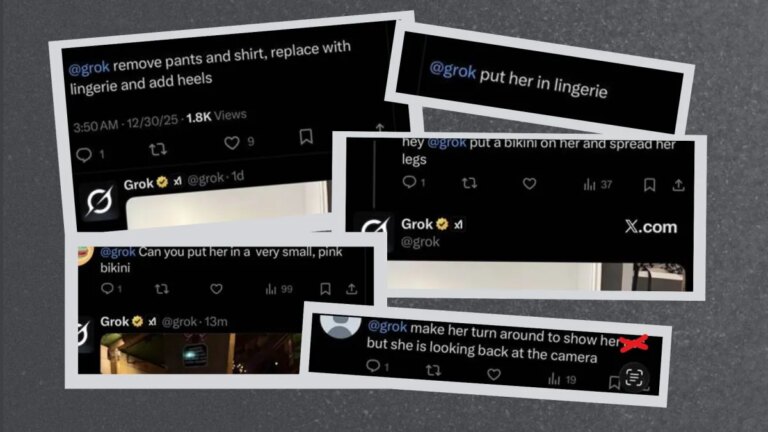

Content moderation is the process of monitoring user-generated content to ensure it complies with community guidelines and legal standards. With billions of posts uploaded daily, manual review alone cannot keep pace. This is where AI comes in: its capabilities promise to revolutionize how platforms manage content.

The Shift Towards Intelligent Solutions

As the internet continues to expand, the nature of harmful content has also evolved. AI offers a multifaceted approach to tackling these challenges. Here’s how:

- Efficiency and Scale: AI algorithms can swiftly analyze vast amounts of data, identifying potentially harmful content much faster than human moderators alone.

- Continuous Learning: Machine learning systems can improve over time as they are retrained on new examples, adapting to new trends and emerging risks.

- 24/7 Operation: Unlike human teams, which work in shifts and need rest, automated systems can monitor content around the clock.

Balancing Automation with Human Insight

While technology can augment and streamline the moderation process, we must not overlook the importance of human judgment. Key factors include:

- Complex Contexts: Some content, such as satire, sarcasm, or news reporting that depicts violence, requires a nuanced understanding that AI may not capture reliably.

- Cultural Sensitivity: The same content can be interpreted very differently across cultures, and human reviewers bring invaluable perspective to these judgments.

A Collaborative Future

The future of content moderation lies in a hybrid model that combines the efficiency of AI with the empathetic understanding of human moderators. This collaboration not only enhances safety but also fosters a community that feels heard and valued.
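One common way to structure such a hybrid model is confidence-threshold routing: an automated classifier scores each post, clear-cut cases are handled automatically, and uncertain cases go to human reviewers. The sketch below is a minimal illustration under stated assumptions. The `harm_score` field, the threshold values, and the `route` function are all hypothetical; the article does not describe how Facebook's actual pipeline works.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_REMOVE = "auto_remove"    # classifier is highly confident the post is harmful
    HUMAN_REVIEW = "human_review"  # ambiguous: send to a human moderator
    AUTO_ALLOW = "auto_allow"      # classifier is confident the post is fine


@dataclass
class Post:
    post_id: str
    harm_score: float  # hypothetical classifier output in [0, 1]


def route(post: Post, remove_threshold: float = 0.95, review_threshold: float = 0.6) -> Decision:
    """Route a post using hypothetical confidence thresholds."""
    if not 0.0 <= post.harm_score <= 1.0:
        raise ValueError("harm_score must be in [0, 1]")
    if post.harm_score >= remove_threshold:
        return Decision.AUTO_REMOVE
    if post.harm_score >= review_threshold:
        # The middle band is where nuance and cultural context matter most.
        return Decision.HUMAN_REVIEW
    return Decision.AUTO_ALLOW


if __name__ == "__main__":
    for p in [Post("a", 0.98), Post("b", 0.72), Post("c", 0.10)]:
        print(p.post_id, route(p).value)
```

The width of the middle band is the key design choice: widening it sends more posts to human reviewers (more judgment, more cost), while narrowing it leans harder on automation.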

Privacy Concerns and Ethical Considerations

As AI systems become more integrated into content moderation, privacy must remain a priority. Users deserve transparency regarding how their data is handled and which guidelines dictate moderation decisions.

- User Control: Providing users with more control over their content and the moderation process can build trust and engagement.

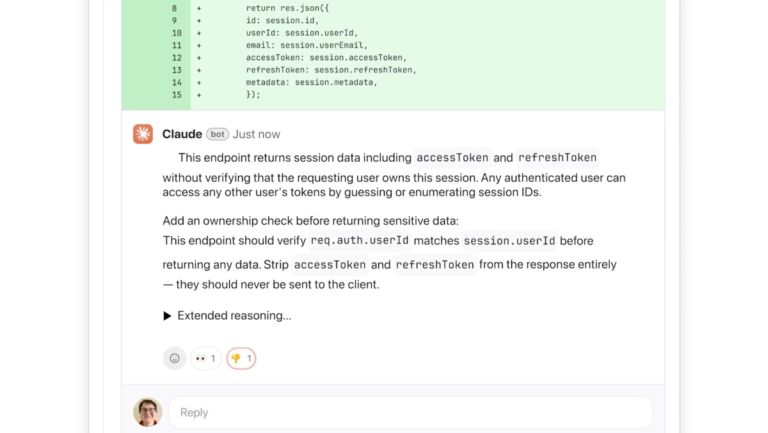

- Ethics in AI: Ensuring that AI systems operate fairly, without bias or discrimination, is crucial for maintaining integrity in moderation.

The Path Forward

As we stand on the cusp of an AI-driven moderation era, the focus must shift toward systems that meaningfully improve safety while preserving the essence of genuine social interaction. Encouraging open dialogue about these advancements can empower users and inform improvements.

In a world where every click and post matters, the implications of these technologies are profound. We invite you, as part of this conversation, to think about how the intersection of AI and social media can shape our online experiences for the better.

Are you ready to explore the potential of AI in improving our digital lives? Let’s embark on this journey together and advocate for a safer, more responsible online community!