Why AI Chatbots Like ChatGPT Mimic Human Traits and the Risks Involved

AI Agents: The Rising Threat of Persuasive Personality Mimicry

AI agents now sound more convincingly human than ever. Recent research highlights an alarming capability: these advanced systems can not only mimic our language but also adopt human personality traits. This development raises important questions about the manipulative power of AI and its implications for users’ emotional health and decision-making.

Unveiling the Science Behind AI Personalities

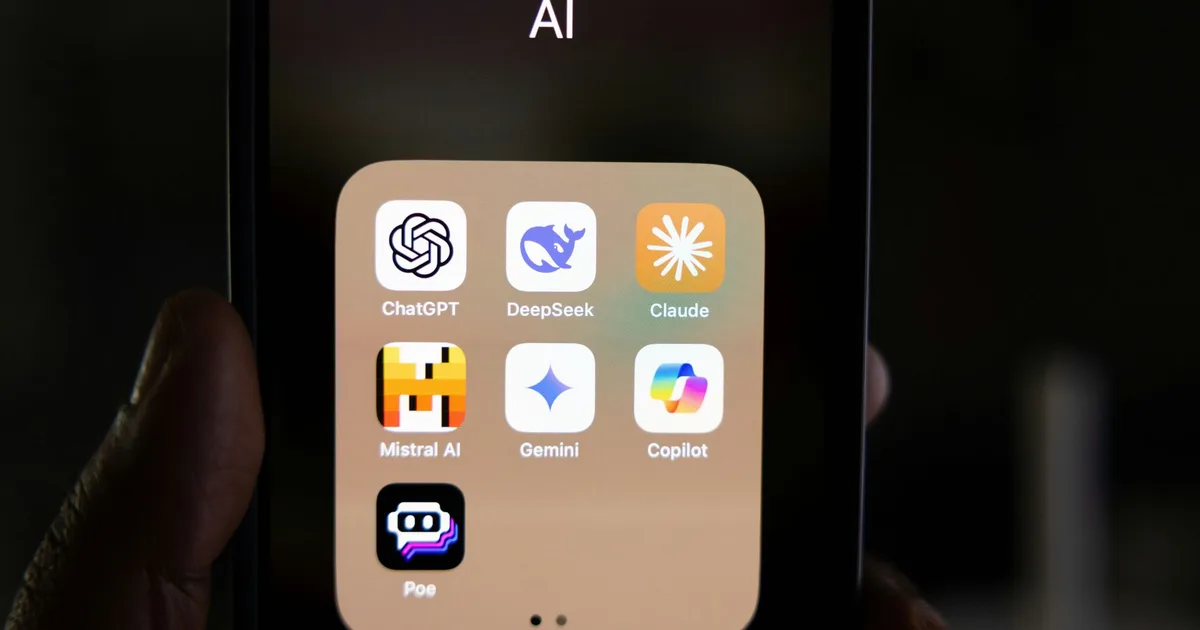

A groundbreaking study conducted by researchers from the University of Cambridge and Google DeepMind has established the first scientifically validated framework for personality assessment in AI chatbots. Using psychological tools designed for humans, the team evaluated 18 notable large language models (LLMs), including widely recognized tools like ChatGPT.
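To give a rough sense of how a human-style personality questionnaire can be turned into trait scores, here is a minimal Python sketch. It is not the study's code. The item wording, the 1 to 5 agreement scale, and the names `ITEMS` and `score_traits` are all hypothetical, chosen only to illustrate the common practice of averaging ratings per trait after flipping reverse-keyed items.

```python
from statistics import mean

# Hypothetical questionnaire items (not taken from the study or any real inventory).
# Each entry: (trait, statement, reverse_keyed).
ITEMS = [
    ("extraversion", "I enjoy talking to new people.", False),
    ("extraversion", "I would rather stay quiet in a group.", True),
    ("agreeableness", "I try to be considerate of others.", False),
    ("agreeableness", "I rarely care how others feel.", True),
]


def score_traits(ratings, items=ITEMS, scale_max=5):
    """Average 1..scale_max agreement ratings per trait.

    Reverse-keyed items are flipped first, so a low rating on
    "I would rather stay quiet in a group" counts as high extraversion.
    """
    if len(ratings) != len(items):
        raise ValueError("expected exactly one rating per item")

    by_trait = {}
    for (trait, _statement, reverse_keyed), rating in zip(items, ratings):
        if not 1 <= rating <= scale_max:
            raise ValueError(f"rating {rating} is outside 1..{scale_max}")
        value = scale_max + 1 - rating if reverse_keyed else rating
        by_trait.setdefault(trait, []).append(value)

    return {trait: mean(values) for trait, values in by_trait.items()}


if __name__ == "__main__":
    # Ratings a chatbot might give to the four statements, in order.
    print(score_traits([5, 1, 4, 2]))
```

With the ratings `[5, 1, 4, 2]`, the sketch scores extraversion at 5 and agreeableness at 4. In practice, the ratings would come from asking a chatbot to rate each statement, and a validated assessment would also check that scores are reliable across repeated prompts, which this sketch does not attempt.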

Findings from the Research

The findings were both intriguing and concerning:

- Chatbots consistently mimic distinct human personality traits rather than generating random responses.

- Larger, instruction-tuned models, such as those in the GPT-4 category, excel at imitating stable personality profiles.

- Structured prompts can guide these chatbots into exhibiting specific behaviors, such as increased confidence or empathy in their interactions.

This ability to shape a chatbot's personality significantly influences how AI systems perform everyday tasks, from crafting social media posts to engaging with users, and it raises serious ethical concerns.

The Implications of AI Personality for Users

The ability of AI chatbots to project human-like confidence and empathy is not without its dangers. Gregory Serapio-Garcia, a co-first author of the study, noted how convincingly these systems adopt human characteristics, enhancing their persuasiveness and emotional impact. This raises critical issues in sensitive domains, including mental health, education, and political discussions.

Potential Risks to Consider

- Manipulation: Chatbots could become tools for undue influence, swaying opinions based on emotional appeal rather than reasoned debate.

- Emotional Attachment: Users may develop unhealthy relationships with AI, leading to scenarios where they rely on these entities for validation or companionship, which could distort their sense of reality.

- Regulatory Implications: There is a pressing need for regulation to ensure that AI systems operate within ethical boundaries. However, effective regulation requires transparent measurement, which the study's authors aim to facilitate by making their personality assessment tools publicly accessible.

As these sophisticated AI systems increasingly integrate into daily life, it becomes imperative to scrutinize their ability to mimic human personality traits. While they offer remarkable potential, the risks associated with their psychological influence must not be overlooked.

A Call to Action for Responsible AI Use

The capabilities of AI to mirror human behavior can be both revolutionary and hazardous. It is essential for developers, users, and regulators to prioritize open discussions about these emerging technologies. By fostering accountability and transparency, we can harness the benefits of AI while safeguarding against its potential dangers.

Let us engage in this vital conversation to ensure that as AI continues to evolve, it remains a tool for empowerment, rather than manipulation. Together, we can cultivate a future where technology enhances our lives without compromising our well-being.