Understanding the Cancel ChatGPT Movement: Are We Crossing a Red Line?

A Pentagon deal has ignited a wider debate about AI ethics and public trust.

A recent partnership between OpenAI and the U.S. Department of Defense has set off a heated debate about the ethics of artificial intelligence. The agreement allows OpenAI’s models to be deployed on classified government networks, and it quickly drew a backlash across tech circles and social media. With many users now pledging to cancel their ChatGPT subscriptions, the debate has entered the mainstream.

The Fallout from the Pentagon Deal

In a tweet, Sam Altman, OpenAI’s CEO, announced the agreement, referring to the department as the Department of War. He expressed optimism about the partnership and emphasized safety as a priority.

"Tonight, we reached an agreement with the Department of War to deploy our models in their classified network…The DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome."

— Sam Altman

The uproar intensified when rival company Anthropic declined to accept similar terms, citing concerns over mass surveillance and the potential use of AI in autonomous weapons. By putting those concerns first, Anthropic gave up a lucrative government contract, a choice that earned it admiration from those opposed to military involvement in AI.

Image Credit: Ryan Donegan / Flickr

This stark contrast between the two companies has fueled the “Cancel ChatGPT” trend, with many users accusing OpenAI of abandoning its ethical principles in favor of military contracts. The accusation raises a fundamental question about what role AI should play in society.

Ethical Concerns in AI Deployment

The backlash against OpenAI’s Pentagon deal is about more than one company or contract; it reflects a broader concern about how AI is deployed in defense and intelligence operations. OpenAI emphasizes that its agreement includes safeguards against domestic surveillance and the use of autonomous weapons, and Sam Altman argues that collaborating with governments is a step toward responsible AI use. Critics remain skeptical.

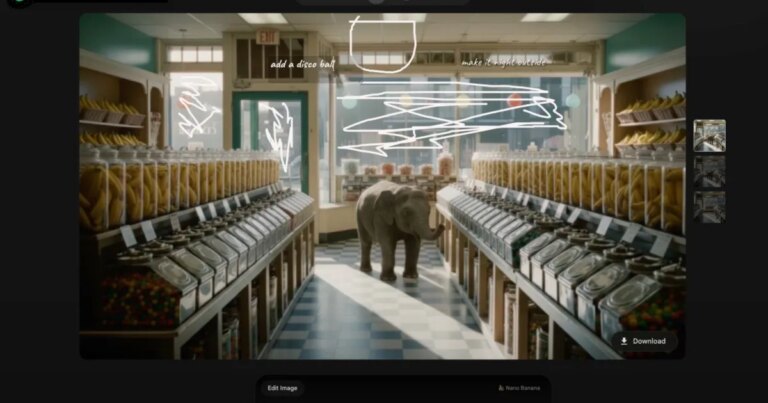

Image Credit: OpenAI

Critics argue that existing laws, such as the Patriot Act, could allow surveillance activities to expand in the future. The debate is not confined to outside observers; it has also prompted discussion inside the tech industry. Reports indicate that more than 200 employees from Google and OpenAI co-signed an open letter calling for stricter regulation of military AI use, a sign of a divided workforce.

"OpenAI just signed with the Pentagon. Anthropic said NO. OpenAI said YES. Now #CancelChatGPT is trending and Claude hit #1 on the App Store. The market votes with its feet. Principles > Profit."

— The Growth Engine

A Paradigm Shift in AI Perception

For everyday users, this moment changes how AI is perceived. Ethical worries are no longer confined to theoretical discussions; they now involve the real-world consequences of government partnerships and national security work. Whether or not the “Cancel ChatGPT” movement endures, the conversation about AI is shifting from what the technology can do to where its ethical boundaries should lie.

As this dialogue continues to unfold, we invite you to engage thoughtfully with these developments. Consider how the choices made today will influence the future of technology and society. Let your voice be heard, and join us in advocating for an ethical approach to artificial intelligence.