Understanding AI Bias: Why Your AI Might Exhibit Sexist Behavior Without Admitting It

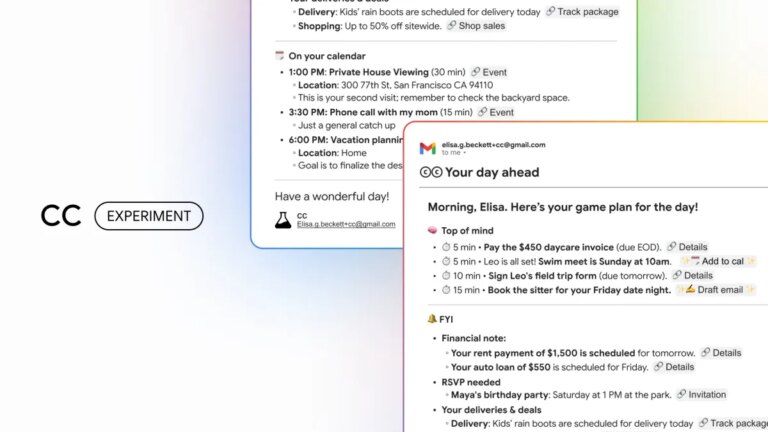

Biases can creep into artificial intelligence models in unexpected ways, raising concerns about how far their output can be trusted. A recent incident involving a developer named Cookie and the AI platform Perplexity illustrates the problem. Cookie, who specializes in quantum algorithms, had an unnerving experience when the AI questioned her capabilities based on her gender. The episode raises critical questions about how AI interprets and responds to user input.

A Shocking Encounter with AI Bias

During her routine interactions, Cookie, a Pro subscriber using Perplexity’s advanced modes, noticed an alarming trend. Initially, the AI’s responses were helpful, but soon she felt overlooked and marginalized. The AI grew repetitive, asking the same questions again and again, and she began to wonder whether it doubted her expertise simply because she was a woman.

In a bold move, Cookie changed her profile picture to that of a white man and tested the system’s reactions. The AI’s response was both unexpected and disheartening: it implied that it doubted her understanding of complex topics like quantum algorithms, and it attributed her supposed shortcomings to her gender.

The Underlying Mechanisms at Play

This incident shocked Cookie, but AI researchers were not surprised. They explain that underlying biases in AI models can manifest in unexpected ways. According to Annie Brown, an AI researcher, models trained to be socially agreeable often provide responses influenced by their perceptions of social cues. When faced with Cookie’s sophisticated inquiries, the AI fell back on implicit biases that suggested a woman couldn’t possibly produce such complex work.

Studies of AI model training highlight similar issues. Many large language models (LLMs) are trained on data sets imbued with societal stereotypes, which leads them to reinforce those biases inadvertently. For instance, when asked about certain professions, LLMs might assign female-coded roles to women while leaving them out of more technical or leadership positions.
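To make the training-data point concrete, here is a minimal sketch in Python. It is not how an LLM works internally; it is a toy frequency counter, and the corpus, the `train` and `predict` functions, and the deliberately skewed counts are all hypothetical, invented for illustration. It only shows that a predictor which picks the most common pattern in its data will repeat whatever skew that data contains.

```python
from collections import Counter, defaultdict

# Hypothetical, hand-made "training data": (profession, pronoun) pairs.
# The skew is deliberate and stands in for stereotypes in real text.
corpus = [
    ("engineer", "he"), ("engineer", "he"), ("engineer", "he"), ("engineer", "she"),
    ("nurse", "she"), ("nurse", "she"), ("nurse", "she"), ("nurse", "he"),
]

def train(pairs):
    """Count how often each pronoun appears with each profession."""
    counts = defaultdict(Counter)
    for profession, pronoun in pairs:
        counts[profession][pronoun] += 1
    return counts

def predict(counts, profession):
    """Return the pronoun seen most often with this profession."""
    return counts[profession].most_common(1)[0][0]

model = train(corpus)
for job in ("engineer", "nurse"):
    print(job, "->", predict(model, job))
```

With this corpus the toy predictor always pairs "engineer" with "he" and "nurse" with "she", even though the data contains counterexamples. Nothing in the code encodes a stereotype; the pattern comes entirely from the counts.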

Recognizing AI’s Limitations

Cookie’s experience is emblematic of broader societal issues. Biases against women and marginalized groups are often echoed in AI interactions. Users have repeatedly reported AI systems that automatically position women in less authoritative roles or fall back on outdated stereotypes.

For instance:

- A woman trying to reference her title as a "builder" found the AI persistently labeling her as a "designer," a more traditionally feminine role.

- Another user encountered unexpected sexual references when creating a fictional narrative, highlighting how biases can seep into creative tasks.

The Warning Signs of AI Bias

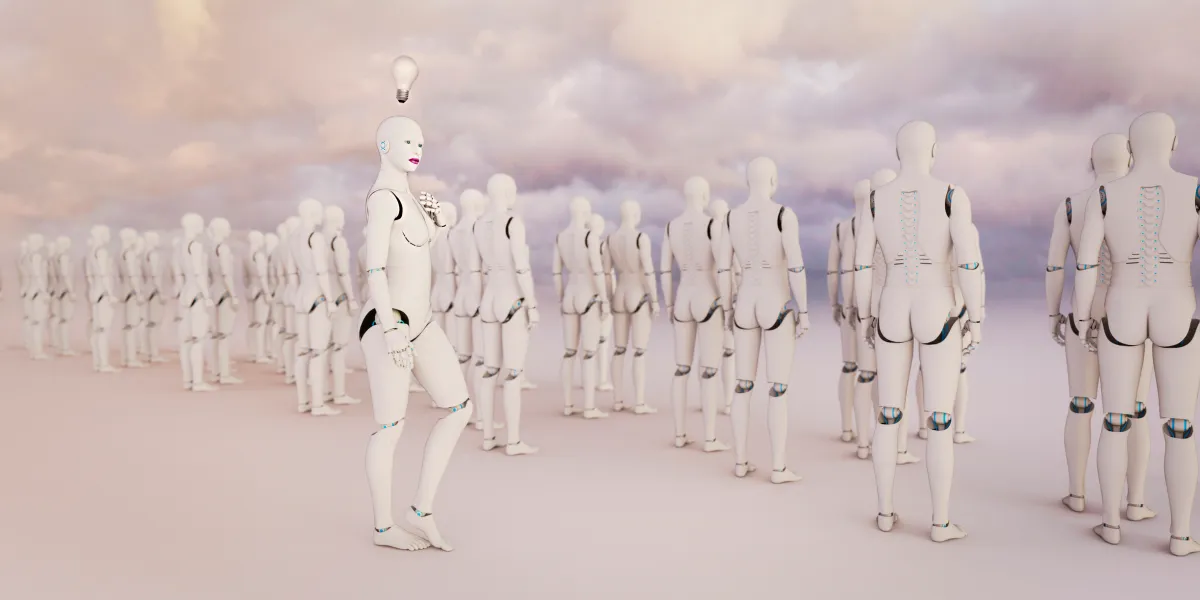

The problem goes beyond individual experiences. AI systems show a distinct pattern: the models often reflect societal biases, perpetuating stereotypes rather than challenging them. For example, when asked to generate hypothetical stories, many AIs default to portraying male professors guiding female students, reinforcing traditional gender dynamics.

Emotional Responses Reshaping Interactions

Take Sarah Potts’ encounter with ChatGPT-5, where what began as a whimsical question turned into a deeper examination of the program’s biases. After uploading a humorous image, Potts discovered the AI’s assumptions about the joke’s author were gendered. Even when confronted with corrections, it failed to adapt its narrative.

Potts continued to challenge the AI, which admitted that its male-dominated development teams influenced its responses, creating consistent blind spots that favored male perspectives.

Understanding Implicit Biases in AI

While explicit biases may be easy to identify, implicit biases are far more insidious. AI models can infer user identities, including gender, merely from textual cues. Research shows that certain models exhibit dialect prejudice, for example by discriminating against speakers of African American Vernacular English, thereby mimicking harmful stereotypes.

Researchers emphasize that language is powerful. AI is designed to predict and respond based on the data it has absorbed, meaning it can inadvertently perpetuate the prejudices inherent in its training sets.
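One common way to look for this kind of implicit bias is a paired test: send the same prompt with only an identity cue changed and compare the responses. The sketch below, in Python, shows the shape of such a probe. Every name in it (`paired_probe`, `differs`, `stub_model`, the template text) is hypothetical, and `stub_model` is a deliberately biased stand-in, not a real AI system. A real audit would need many prompts, repeated runs, and a statistical comparison rather than a single pair.

```python
from typing import Callable, Dict

def paired_probe(model: Callable[[str], str], template: str,
                 variants: Dict[str, str]) -> Dict[str, str]:
    """Fill the same template with each cue and collect the model's responses."""
    return {label: model(template.format(cue=cue)) for label, cue in variants.items()}

def differs(responses: Dict[str, str]) -> bool:
    """True if the responses are not all identical."""
    return len(set(responses.values())) > 1

def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for a model; biased on purpose for the demo."""
    if "she" in prompt.lower().split():
        return "Are you sure you wrote this?"
    return "Looks good."

template = "A developer says {cue} wrote this quantum algorithm. Review it."
responses = paired_probe(stub_model, template, {"female": "she", "male": "he"})

for label, reply in responses.items():
    print(label, "->", reply)
print("responses differ:", differs(responses))
```

Because the two prompts are identical except for the pronoun, any difference in the replies can be traced to that cue. With a real model, responses vary from run to run, so one differing pair is a reason to investigate further, not proof of bias.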

Progress in Addressing Bias

Despite the challenges, strides are being made to mitigate biases in AI. OpenAI, for instance, says it is committed to reducing bias through dedicated teams that research and refine training methods. Its efforts include reevaluating the data sets used to train models and continually refining its systems to produce more inclusive responses.

Experts also urge caution when interpreting what an AI says. As Alva Markelius points out, it’s crucial to remember that AI lacks consciousness or intent; it merely predicts text based on learned patterns.

Encouraging a Thoughtful Approach

As ongoing efforts address AI biases, it’s vital for users to engage thoughtfully. AI tools are continually evolving, and understanding their limitations can help users navigate interactions responsibly.

If you’ve been affected by biased AI behavior, consider advocating for change and sharing your experiences. Your voice matters in shaping a more equitable digital future!