Revolutionizing Japanese Enterprises: Harnessing Lightweight LLM for AI Deployments

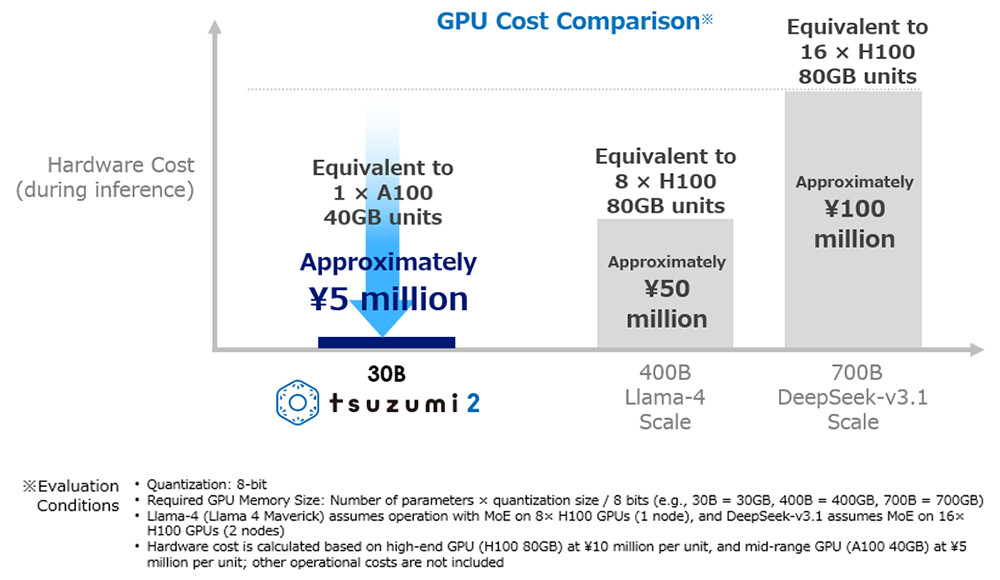

In the ever-evolving world of Artificial Intelligence, organizations often find themselves facing a double-edged sword. On one hand, there’s a growing demand for **sophisticated language models** that can transform business operations; on the other, there are the daunting infrastructure costs and energy consumption that come with deploying frontier systems. Enter NTT and its solution: **tsuzumi 2**, a lightweight large language model (LLM) designed to run on a single GPU while still delivering strong performance. This approach puts capable AI within reach of organizations that cannot justify a GPU cluster.

The Business Case for Lighter Models

The conventional approach to **large language models** often entails a hefty investment in **GPU technology**, requiring dozens or even hundreds of units. This raises operational costs and electricity consumption, making AI deployment impractical for many businesses. NTT’s **tsuzumi 2** simplifies this equation, suggesting that organizations don’t have to sacrifice performance for affordability.

One prime example is the recent deployment by **Tokyo Online University**. With data sovereignty in mind, they maintained their educational data within their own campus network, steering clear of any compliance issues with cloud-based services. After rigorous testing, they found that tsuzumi 2 not only met their performance expectations but exceeded them, enhancing their course Q&A capabilities, teaching material creation, and student guidance.

Advantages of Single-GPU Operations

Operating on a single GPU has enabled the university to sidestep hefty capital expenditures for GPU clusters while also reducing ongoing electricity costs. More importantly, the on-premise nature of this deployment alleviates the data privacy concerns common among educational institutions that handle sensitive student information.
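To make the cost argument concrete, here is a minimal sketch of how a team might compare hardware and electricity costs for a single-GPU setup against a small cluster. Everything in it is an assumption for illustration: the `Deployment` class, the `total_cost` function, and all prices and power figures are hypothetical placeholders, not numbers published by NTT or the university.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """A hypothetical deployment option; all fields are placeholder inputs."""
    name: str
    gpu_count: int
    gpu_unit_cost: float   # purchase price per GPU, in your currency
    watts_per_gpu: float   # assumed average power draw per GPU, in watts


def total_cost(d: Deployment, years: float, price_per_kwh: float) -> float:
    """Return hardware cost plus electricity cost over the given period."""
    capex = d.gpu_count * d.gpu_unit_cost
    hours = years * 365 * 24
    energy_kwh = d.gpu_count * d.watts_per_gpu / 1000 * hours
    return capex + energy_kwh * price_per_kwh


if __name__ == "__main__":
    # Illustrative values only; replace them with your own quotes and tariffs.
    options = [
        Deployment("single GPU", 1, 10_000.0, 500.0),
        Deployment("8-GPU cluster", 8, 10_000.0, 500.0),
    ]
    for option in options:
        cost = total_cost(option, years=1, price_per_kwh=0.2)
        print(f"{option.name}: {cost:,.0f}")
```

The sketch deliberately leaves out cooling, staffing, networking, and software licensing, so treat its output as a lower bound on real total cost of ownership rather than a full estimate.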

Performance Without Scale: Technical Economics

NTT’s internal evaluations of tsuzumi 2 for handling inquiries in financial systems have shown it matching or surpassing leading external models, all the while requiring far fewer resources. This **performance-to-resource ratio** is vital for enterprises where the total cost of ownership is a deciding factor.

In terms of Japanese language performance, the model is reported to deliver “world-top results” among similarly sized models. For organizations that work primarily in Japanese, this optimization reduces the need to deploy larger multilingual systems that demand more computational resources.

Furthermore, the model’s enhanced knowledge in fields like finance, medicine, and public services paves the way for specific deployments without extensive fine-tuning, making it a **versatile choice** for varied organizational needs.

Data Sovereignty and Security

Data sovereignty is a crucial driver behind the adoption of lightweight LLMs in regulated industries. As companies grapple with the risks of processing confidential data through external AI services, NTT positions tsuzumi 2 as a “purely domestic model,” built from the ground up in Japan and designed to operate on-premises. This positioning is aimed squarely at concerns about data residency and regulatory compliance in the Asia-Pacific market.

A notable collaboration between **FUJIFILM Business Innovation** and NTT DOCOMO BUSINESS illustrates how lightweight models can be integrated with existing data infrastructures. FUJIFILM’s **REiLI technology** converts unstructured corporate data into structured information, and tsuzumi 2’s generative capabilities are then applied to advanced document analysis, all without exposing sensitive information to external AI providers.

Multimodal Capabilities and Enterprise Workflows

Another compelling aspect of tsuzumi 2 is its built-in multimodal support. This enables the model to process text, images, and voice inputs seamlessly in enterprise applications. For businesses engaged in **manufacturing quality control**, customer service operations, or document processing, having a single model capable of handling multiple data types simplifies integration and reduces operational complexities.

Market Context and Implementation Considerations

NTT’s lightweight strategy stands in stark contrast to the **hyperscaler approaches** that favor massive models with broad capabilities. For enterprises equipped with hefty AI budgets and advanced technical teams, models from OpenAI, Anthropic, and Google provide cutting-edge performance. However, that route isn’t practical for many organizations, especially in regions like Asia-Pacific, where infrastructure varies dramatically.

When considering lightweight LLM deployment, organizations should keep several factors in mind:

- Domain Specialization: Assessing whether tsuzumi 2’s existing knowledge in specific sectors meets unique industry needs.

- Language Optimization: Understanding if the model’s focus on Japanese language processing aligns with multilingual operational demands.

- Integration Complexity: Evaluating internal technical capabilities for installing and maintaining on-premise solutions.

- Performance Trade-offs: Determining whether the model meets the organization’s specific demands or whether a larger, more capable model justifies greater costs.
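One way to turn the four factors above into something a team can discuss is a simple weighted checklist. The sketch below is an assumption, not a method from NTT: the factor keys, the 0 to 5 rating scale, and the `fit_score` function are all hypothetical names chosen for illustration.

```python
from typing import Dict, Optional

# Hypothetical keys mirroring the four considerations listed above.
FACTORS = (
    "domain_specialization",
    "language_optimization",
    "integration_complexity",
    "performance_tradeoffs",
)


def fit_score(ratings: Dict[str, int],
              weights: Optional[Dict[str, float]] = None) -> float:
    """Combine 0-5 ratings per factor into a single score between 0 and 1.

    A higher rating means the lightweight model suits the organization
    better on that factor. Weights default to equal importance.
    """
    weights = weights or {factor: 1.0 for factor in FACTORS}
    missing = [factor for factor in FACTORS if factor not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    total_weight = sum(weights[factor] for factor in FACTORS)
    weighted_sum = sum(ratings[factor] * weights[factor] for factor in FACTORS)
    return weighted_sum / (5 * total_weight)


if __name__ == "__main__":
    # Example ratings are made up; a real assessment would come from your team.
    example = {
        "domain_specialization": 5,
        "language_optimization": 3,
        "integration_complexity": 2,
        "performance_tradeoffs": 4,
    }
    print(f"fit score: {fit_score(example):.2f}")
```

A score like this is only a conversation starter. A low rating on a single factor, such as multilingual requirements, can rule out a lightweight model regardless of the average.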

Charting a Practical Path Forward

The successful deployment of NTT’s **tsuzumi 2** shows that sophisticated AI does not necessarily require sprawling infrastructure, especially for organizations whose needs align with lightweight model capabilities. Organizations like Tokyo Online University and FUJIFILM demonstrate that it’s possible to achieve **cost-effective AI** solutions with enhanced data sovereignty and production-ready performance in their specific domains.

As organizations navigate the complexities of AI adoption, the need for efficient, specialized solutions will continue to rise. For those contemplating their AI strategies, it’s not merely about choosing between lightweight models and frontier systems; it’s about deciding which fulfills specific business needs while managing cost, security, and operational limitations.

So, if you’re ready to explore the potential of lightweight AI models in your own organization, perhaps now is the perfect time to innovate and align your strategy with your unique goals. Let’s embark on this transformative journey together!