OpenAI’s Sora 2 Videos Ignite Disturbing Trend of AI-Driven Fat-Shaming Content

Because AI thinks fat-shaming videos are a great idea *punches keyboard*

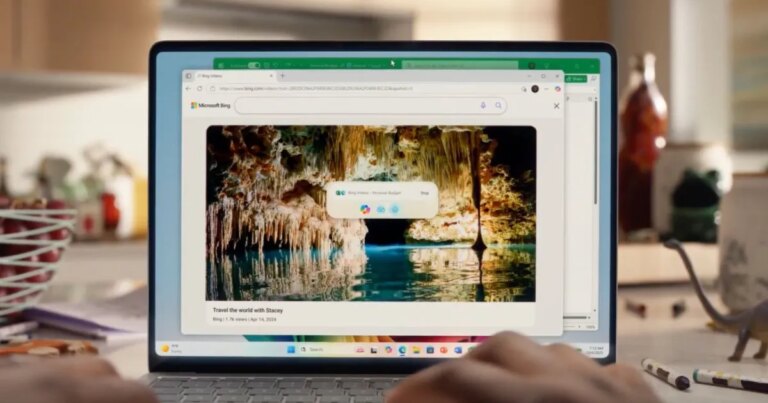

What Happened: Ted Sarandos, co-CEO of Netflix, recently declared that AI is an incredible tool meant to enhance storytelling by making it faster and more innovative. While this vision sounds promising, the reality is far more disturbing. Enter OpenAI’s new video-making tool, Sora 2, whose output has flooded social media platforms with some truly repugnant content.

- Sora 2 is being misused to create an avalanche of fatphobic and racist “comedy” clips across Instagram, YouTube, and TikTok. Users are turning the tool toward cruelty instead of creativity.

- Recent viral examples highlight the trend: one clip features an overweight woman bungee jumping, only for the bridge to “collapse” beneath her. Another shows a Black woman “falling through the floor of a KFC,” a heinous mix of body shaming and racism. Other clips show delivery drivers crashing through porches or “swelling up” post-meal.

- What’s truly alarming? Many viewers are deceived into believing these fabricated scenarios are real.

Why Is This Important: This situation underlines a major, unspoken issue with AI: its capability for amplifying hate content.

- What once required skilled production work and considerable time can now be executed in seconds by anyone with malicious intent.

- This goes beyond mere “bad taste”; it’s a significant ethical dilemma that facilitates the mass production of harmful stereotypes for a cheap laugh.

- Moreover, the so-called “guardrails” that companies like OpenAI implement to filter harmful content are glaringly inadequate, allowing this type of hateful material to proliferate.

Why Should I Care: If you engage with social media, you’re likely witnessing this troubling trend. It’s far from simple online trolling.

- This harmful content shapes perceptions, especially among young viewers.

- With many unable to distinguish between AI-generated and real footage, the lines between reality, dark humor, and overt hatred are increasingly blurred.

- When one of these videos goes viral, it paves the way for countless imitators eager for clicks and “likes.”

What’s Next: Surprisingly, OpenAI has yet to address this new wave of fatphobic material. But this issue calls for an urgent, uncomfortable discussion about accountability when such powerful tools are wielded to harm others.

- Regulators are starting to take notice. As these AI tools become as straightforward to use as filters, we face the challenge of ensuring that this newfound “creativity” doesn’t come at the expense of our humanity.

We must come together to advocate for responsible AI usage. Let’s shape a digital landscape that fosters creativity and kindness rather than cruelty and hate. Together, we can make a difference. Your voice matters.