Microsoft Blocks $4 Billion in Fraud Attempts as AI-Driven Scams Surge

AI-powered scams are growing more sophisticated, using generative tools to target unsuspecting victims. Microsoft’s latest Cyber Signals report documents the trend: over the past year, the company thwarted $4 billion in fraud attempts and blocked nearly 1.6 million bot sign-up attempts every hour. The figures show how quickly online deception is evolving.

The latest edition of the report, titled “AI-powered deception: Emerging fraud threats and countermeasures,” describes how advances in artificial intelligence have lowered the barrier to entry for cybercriminals. Scams that once took days to orchestrate can now be assembled in minutes, allowing even inexperienced fraudsters to produce convincing schemes with little effort. This democratization of fraud is not just a technical concern; it affects consumers and businesses worldwide.

The Evolution of AI-Enhanced Cyber Scams

The report shines a light on how AI tools can effortlessly scrape the web for company information, enabling cybercriminals to create detailed profiles for targeted social engineering attacks.

With these tools, they can craft fake AI-generated reviews and storefronts that appear genuine, complete with fictitious business histories and customer testimonials.

Kelly Bissell, Corporate Vice President of Anti-Fraud and Product Abuse at Microsoft Security, emphasizes the rising stakes: “Cybercrime is a trillion-dollar problem, and it has been increasing year after year.”

Bissell sees an opportunity to turn AI toward protection, pointing out, “Now we have AI that can make a difference at scale and help us build security and fraud protections into our products much faster.”

E-Commerce and Employment Scams Leading the Charge

Two particularly troubling areas where AI is enhancing fraud are e-commerce and job recruitment scams. In the e-commerce landscape, fraudulent websites can be set up within moments, often mimicking legitimate businesses. They employ AI to generate compelling product descriptions, images, and even customer reviews to mislead consumers into thinking they’re engaging with reputable merchants.

Adding to the deception, AI-driven customer service chatbots can interact with potential victims, stalling them with scripted excuses to delay chargebacks and answering complaints with generated responses that make the scam site look professional.

Job seekers are equally vulnerable, as generative AI simplifies the creation of fake job listings across various platforms. Scammers utilize forged credentials, auto-generate job descriptions, and execute phishing campaigns aimed at job hunters.

AI-powered interviews and automated email communications increase the believability of these scams, making them more challenging to detect. “Fraudsters often ask for personal information, like resumes or even bank account details, under the guise of verifying the applicant’s credentials,” the report warns.

Watch for Red Flags

Be wary of:

- Unsolicited job offers

- Requests for payment

- Communication through informal platforms like text messages or WhatsApp

Microsoft’s Comprehensive Countermeasures

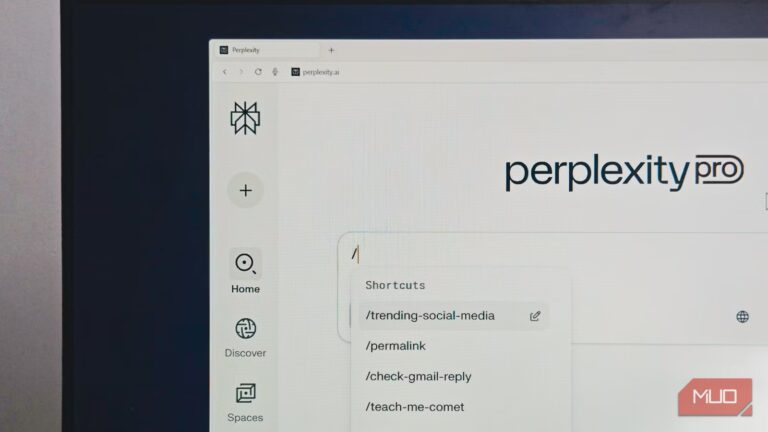

Microsoft is countering these emerging threats with a multi-layered approach. Microsoft Defender for Cloud secures Azure resources, while the Microsoft Edge browser uses deep learning to protect users from fraudulent sites. According to the report, Edge also offers website typo protection and guards against domain impersonation.
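To illustrate the general idea behind typo protection, the sketch below flags a domain that sits within a small edit distance of a known brand domain. It is a generic technique shown under stated assumptions, not a description of how Edge works; the `KNOWN_DOMAINS` list, the `likely_impersonation` helper, and the `max_distance` threshold are all hypothetical.

```python
from typing import Optional, Sequence


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance computed with a two-row dynamic program."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute (or match)
            ))
        previous = current
    return previous[-1]


# Hypothetical allow-list of legitimate domains (an assumption for this sketch).
KNOWN_DOMAINS = ("microsoft.com", "example.com")


def likely_impersonation(
    domain: str,
    known: Sequence[str] = KNOWN_DOMAINS,
    max_distance: int = 2,
) -> Optional[str]:
    """Return the legitimate domain that `domain` appears to imitate, if any."""
    domain = domain.lower().strip(".")
    if domain in known:
        return None  # exact match: this is the real site
    for legit in known:
        if edit_distance(domain, legit) <= max_distance:
            return legit
    return None


if __name__ == "__main__":
    for candidate in ("micros0ft.com", "microsoft.com", "wikipedia.org"):
        print(candidate, "->", likely_impersonation(candidate))
```

A plain edit-distance check misses lookalike Unicode characters and added subdomains, so a production system would likely combine several signals.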

The company has also enhanced Windows Quick Assist to warn users about potential tech support scams before they grant access to unverified individuals. Microsoft currently blocks an average of 4,415 suspicious Quick Assist connection attempts each day.

As part of its Secure Future Initiative (SFI), Microsoft has introduced a new fraud prevention policy. Beginning in January 2025, product teams are required to conduct fraud assessments and incorporate fraud controls directly into their design processes, thus ensuring products are “fraud-resistant by design.”

Staying Vigilant

As AI-driven scams continue to evolve, consumer awareness is crucial. Microsoft advises users to:

- Stay cautious of urgency tactics

- Verify website legitimacy before making purchases

- Avoid sharing personal or financial information with unverified sources

For enterprises, implementing multi-factor authentication and deploying deepfake-detection algorithms can significantly reduce risk.
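As one concrete example of a second authentication factor, the sketch below generates and checks time-based one-time passwords following RFC 6238 (TOTP) on top of RFC 4226 (HOTP). The report does not say which MFA method to use, so this is only an illustration; the function names, the 30-second step, and the one-step drift window are assumptions, and real deployments should rely on a vetted library and securely stored secrets.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over a time counter."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None,
           step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps either side."""
    now = time.time() if at is None else at
    counter = int(now // step)
    for drift in range(-window, window + 1):
        if counter + drift < 0:
            continue  # no valid counter before the epoch
        expected = hotp(secret, counter + drift)
        # Constant-time comparison avoids leaking how many digits matched.
        if hmac.compare_digest(expected.encode(), submitted.encode()):
            return True
    return False


if __name__ == "__main__":
    demo_secret = b"12345678901234567890"  # RFC test secret, not for real use
    code = totp(demo_secret)
    print("current code:", code, "valid:", verify(demo_secret, code))
```

The drift window trades a little security for tolerance of clock skew between the user's device and the server.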

Call to Action

We all have a role to play in safeguarding our digital experiences. By staying informed and vigilant, we can collectively build a more secure online environment. So, let’s commit to sharing information, educating ourselves, and empowering others to recognize and combat these growing threats.