How State-Sponsored Hackers Are Leveraging AI to Execute Sophisticated Cyberattacks

State-sponsored hackers are increasingly using AI to strengthen their cyberattacks. According to Google's Threat Intelligence Group (GTIG), actors linked to Iran, North Korea, China, and Russia are using advanced models such as Google's Gemini to craft more convincing phishing lures and develop more sophisticated malware.

GTIG's latest AI Threat Tracker report describes these government-backed groups embedding AI throughout the attack lifecycle, with significant efficiency gains in reconnaissance, social engineering, and malware development.

“For these state-sponsored groups, large language models are crucial for technical research, strategic targeting, and crafting persuasive phishing schemes,” stated researchers from GTIG.

AI-Powered Reconnaissance: Targeting the Defense Sector

One notable case is the Iranian group APT42, which used Gemini to support its reconnaissance and social engineering. The group used the model to search for official email addresses and to build credible pretexts tailored to individual targets.

By feeding detailed biographies of targets into Gemini, APT42 crafted personas and scenarios designed to provoke a response. It also used the model's translation capabilities to work across language barriers, avoiding typical phishing red flags such as poor grammar or awkward phrasing.

Another prominent actor, North Korea's UNC2970, targets the defense sector and impersonates corporate recruiters. The group used Gemini to synthesize open-source intelligence and profile high-value targets, researching major cybersecurity firms, specific technical job roles, and even salary ranges.

GTIG remarked, “This kind of activity blurs the lines between standard professional research and malicious intelligence gathering, enabling the creation of highly tailored phishing personas.”

Rising Threat: Model Extraction Attacks

Beyond operational misuse, GTIG reported a rise in model extraction attempts, also known as "distillation attacks." These attacks aim to steal a model's intellectual property by querying it at scale and using its outputs to train a cheaper imitation.

In one campaign, attackers sent more than 100,000 prompts designed to coax Gemini into revealing its complete reasoning traces. The breadth of the questions suggested an attempt to replicate Gemini's reasoning ability across a wide range of tasks, including in non-English languages.

GTIG has not observed advanced persistent threat (APT) actors directly attacking frontier models, but it did see frequent extraction attempts from private-sector entities around the world. According to Google, its systems detected these attempts in real time and deployed defenses to protect the model's internal reasoning traces.

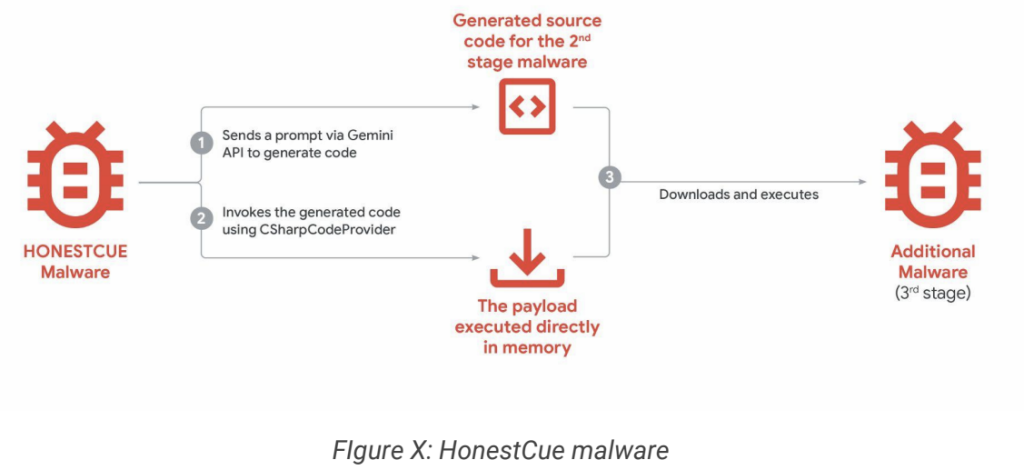

The Emergence of AI-Integrated Malware

GTIG also identified a malware family called HONESTCUE that calls Gemini's API to outsource part of its functionality. Its layered obfuscation makes it particularly effective at evading conventional network-based detection.

HONESTCUE works as a downloader and launcher framework: it sends prompts through Gemini's API and receives C# source code in response, which it then compiles and runs. This design lets it execute payloads directly in memory without leaving artifacts on disk.

GTIG also uncovered COINBAIT, a phishing kit whose development was reportedly accelerated by AI code-generation tools. Built with Lovable AI, the kit impersonates a well-known cryptocurrency exchange in order to harvest users' credentials.

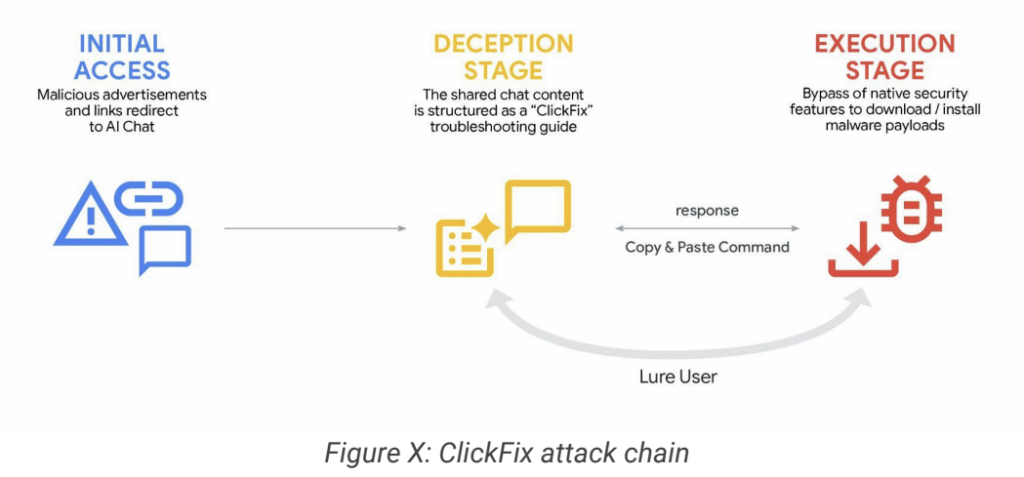

ClickFix Campaigns: Abuse of AI Chat Platforms

In a new social engineering technique first observed in December 2025, Google saw threat actors abuse popular generative AI services, including Gemini and ChatGPT, to distribute deceptive content that delivered the ATOMIC infostealer to macOS users.

The attackers had the chatbots produce seemingly harmless instructions for common computer tasks, with malicious command-line scripts embedded as the "fix." They then created shareable links to these chat transcripts, so the lure was hosted on a trusted AI provider's domain. This is the core of the ClickFix approach: rather than exploiting a software vulnerability, it persuades the victim to copy and run the malicious command themselves.

Thirst for Stolen API Keys: Underground Marketplaces Flourish

GTIG reports strong demand for AI-enabled tools on both English- and Russian-language underground forums. However, many cybercriminals and state-sponsored hackers lack the ability to build their own AI models, so they rely instead on mature commercial models, often accessed with stolen credentials.

One example is "Xanthorox," a toolkit marketed as an autonomous AI for generating malware and phishing campaigns. GTIG's investigation found that it was not a proprietary model at all but was powered by existing commercial AI models, including Gemini, accessed through stolen API keys.

Google’s Strategic Response

In response, Google disabled the accounts and resources associated with the identified threat actors. It has also strengthened its classifiers and models to make them more resistant to similar misuse in the future.

“We are devoted to the bold and responsible development of AI,” the report emphasizes. “This commitment entails proactive measures to thwart malicious activities by shutting down projects and accounts associated with bad actors, while continually enhancing our models to be less vulnerable to misuse.”

Despite this activity, GTIG stressed that it has not yet seen any threat actor gain capabilities that significantly change the overall security landscape.

The findings underline AI's growing role on both sides of cybersecurity. As attackers and defenders race to harness the technology, enterprise security teams must strengthen their defenses against AI-enhanced social engineering, particularly in regions such as Asia-Pacific, where threats from China and North Korea remain pronounced.

In a fast-moving threat environment, staying informed and prepared is essential. By sharing threat intelligence and defensive strategies, the security community can meet these challenges head-on.