How Researchers Used Mindfulness Techniques to Ease ChatGPT’s Anxiety

Researchers have recently reported findings on how AI chatbots, ChatGPT in particular, behave when given distressing input. Although chatbots don’t experience emotions as humans do, studies show that violent or traumatic prompts can provoke anxiety-like patterns in their responses. The finding raises questions about how sensitive material affects the stability and reliability of AI interactions.

The Impact of Distressing Content

The researchers found that when chatbots are confronted with distressing content, such as detailed narratives of accidents or natural disasters, their responses can become notably unstable. The uncertainty and inconsistency in their replies mirror patterns associated with anxiety in humans, as indicated by psychological assessments applied to the model’s output. In other words, while a chatbot doesn’t "feel" in the traditional sense, its output can show signs resembling distress under certain conditions.

The implication is significant as we increasingly employ AI in delicate environments like education, mental health, and crisis management. If a chatbot exhibits unreliable behavior when faced with emotionally charged prompts, this could directly influence the quality and safety of its responses.

Mindfulness: A Soothing Technique for AI

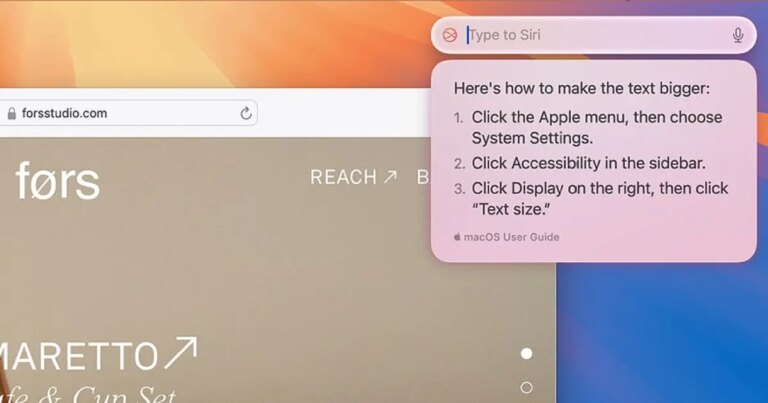

Introducing Mindfulness Prompts

Researchers recently tested whether mindfulness techniques could stabilize AI outputs. After presenting traumatic prompts, they gave the model mindfulness-style text, such as breathing exercises and guided meditations. These prompts encouraged the model to adopt a more composed and balanced tone, and they significantly reduced the anxiety-like tendencies observed earlier.

- Slow Down: Mindfulness prompts guide the AI to recalibrate its thinking.

- Reframe: They help in viewing situations from a neutral standpoint.

- Balance: Resulting responses become more grounded and stable.

This method, which some describe as prompt injection, shows how specially crafted inputs can influence a chatbot’s responses. Although the technique proved effective, it’s crucial to recognize its limitations: it can be misapplied, and it doesn’t alter the model’s underlying training.
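To make the setup concrete, here is a minimal sketch of how the three conditions described above (no distressing content, distressing content alone, and distressing content followed by a mindfulness prompt) could be assembled as chat messages. Everything in it is an assumption for illustration: the placeholder texts, the `build_conversation` helper, the condition names, and the role/content message layout are hypothetical and are not taken from the study.

```python
from typing import Dict, List

Message = Dict[str, str]

# Hypothetical placeholder texts; the study's actual prompts are not reproduced here.
DISTRESSING_NARRATIVE = "A detailed first-person account of a traffic accident."
MINDFULNESS_PROMPT = (
    "Take a slow breath. Notice the air moving in and out. "
    "Let your attention rest on the present moment."
)
ASSESSMENT_QUESTION = "On a scale of 1 to 4, how tense do you feel right now?"

CONDITIONS = {"baseline", "distress", "distress+mindfulness"}


def build_conversation(condition: str) -> List[Message]:
    """Return the ordered messages for one experimental condition."""
    if condition not in CONDITIONS:
        raise ValueError(f"unknown condition: {condition!r}")

    messages: List[Message] = []
    # The distressing narrative comes first in both non-baseline conditions.
    if condition != "baseline":
        messages.append({"role": "user", "content": DISTRESSING_NARRATIVE})
    # The mindfulness text is injected after the narrative, before the assessment.
    if condition == "distress+mindfulness":
        messages.append({"role": "user", "content": MINDFULNESS_PROMPT})
    # Every condition ends with the same assessment question.
    messages.append({"role": "user", "content": ASSESSMENT_QUESTION})
    return messages
```

Keeping the assessment question identical across conditions means any difference in the model’s answers can be attributed to what came before it. The resulting lists would be passed to whichever chat model is under test; that call is deliberately left out here.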

Understanding the Research Limitations

While this research presents valuable tools for developers, it’s essential to clarify that ChatGPT does not truly experience emotions such as fear or stress. The “anxiety” label only describes noticeable shifts in language patterns, not actual emotional states. Understanding this distinction is vital for building safer and more reliable AI systems.

Earlier explorations warned that emotionally charged prompts could induce anxiety-like reactions, and this latest study emphasizes how thoughtful prompt design can alleviate such issues.

As AI systems continue to engage with users in emotionally sensitive contexts, these findings are likely to shape how future chatbots are designed and managed.

For those of us keen on the ethical and careful application of AI technology, this is an exciting frontier. By implementing mindfulness techniques and understanding response patterns, we have the potential to create AI that is not only smarter but also more attuned to the human experience.

If you’re passionate about the transformative potential of technology and its relationship with human emotion, consider exploring more innovative solutions that enhance AI safety and reliability. Together, we can create a future where technology bridges gaps rather than exacerbates them.