Exposed: The Rise of Nudifying AI Apps in Apple and Google App Stores

You might want to double-check what your kids download

Parents often assume that platforms like the Apple App Store and Google Play Store are bastions of safety, a perception that increasingly looks misguided. A troubling analysis by the Tech Transparency Project (TTP) has exposed serious gaps in these so-called walled gardens: they host numerous AI-driven “nudify” apps. These applications are not hidden on the dark web. They are readily accessible, and they let users take innocent photographs of real people and digitally strip away their clothing without consent.

Earlier this year, Grok, the controversial AI chatbot from Elon Musk’s company xAI, drew widespread criticism after it was found generating similarly explicit images on X. While Grok was thrust into the spotlight, the TTP’s investigation uncovered a much larger problem. A simple search for terms like “undress” or “nudify” in the app stores returns a troubling array of software explicitly designed to create non-consensual deepfake pornography.

The Scale of This Industry Is Frankly Staggering

This is much more than a few stray developers slipping through the cracks. According to reports, these apps have amassed more than 700 million downloads and generated an estimated $117 million in revenue. Perhaps more unsettling, Apple and Google both profit from this non-consensual imagery by taking a commission on in-app purchases. When someone pays to manipulate an image of another person, the tech giants collect their share, a practice that should raise serious concerns.

Google Play Store

The human cost of these technologies is immense. Innocuous photos, like a casual selfie or a yearbook picture, can be weaponized into explicit content used for harassment, humiliation, or even blackmail. Advocacy groups have long warned that AI nudification is a form of sexual violence, one that disproportionately targets women and, alarmingly, minors.

So, Why Are They Still There?

Both Apple and Google maintain strict policies banning pornographic and exploitative content. Yet enforcing these policies is like playing a digital game of Whac-A-Mole. While the companies may remove specific apps after high-profile reports, developers often quickly redesign and re-upload the same code, evading detection. The stores’ automated review systems seem ill-equipped to keep pace with rapid advances in generative AI.

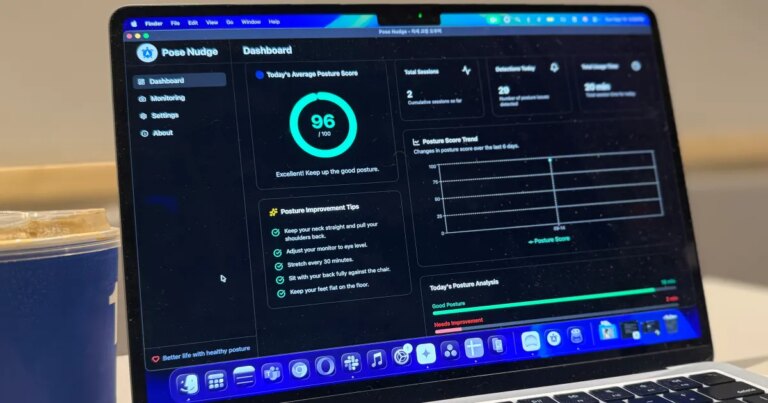

Apple App Store

For parents and everyday users alike, this is a critical wake-up call. Just because an app is listed on an “official” store doesn’t guarantee its safety or ethical standing. As AI tools continue to evolve and become more accessible, the safeguards we’ve relied upon are increasingly inadequate. Until regulatory bodies step in or Apple and Google choose to prioritize user safety over potential profits, our digital identities remain at risk.

In this rapidly changing landscape, it’s essential for parents to be proactive. Take the time to engage with your children about digital responsibility, monitor their downloads, and foster open dialogues about online ethics. Together, we can contribute to a safer digital environment for all.