Exploiting AI Chatbots: How Web Browsing Features Can Be Misused as Malware Channels

AI chatbots have transformed the way we interact with technology, but recent research highlights a downside: their web-browsing features can be abused by malicious actors. Check Point Research recently demonstrated a technique in which malware uses an AI assistant’s ability to fetch web pages as a relay for its command-and-control traffic. The finding shows how a helpful feature can be turned into an attack channel, and it raises practical questions for any business, including those in beauty and wellness, that wants to adopt these tools without giving up security.

Understanding the Threat: AI as a Relay

The concept behind the demonstration is alarmingly simple. Instead of contacting the attacker’s server directly, malware on an infected machine asks an AI chatbot to load and summarize a specific attacker-controlled URL. That page contains hidden instructions, and the chatbot’s summary passes them back to the malware. The same channel can also be used to extract sensitive data.

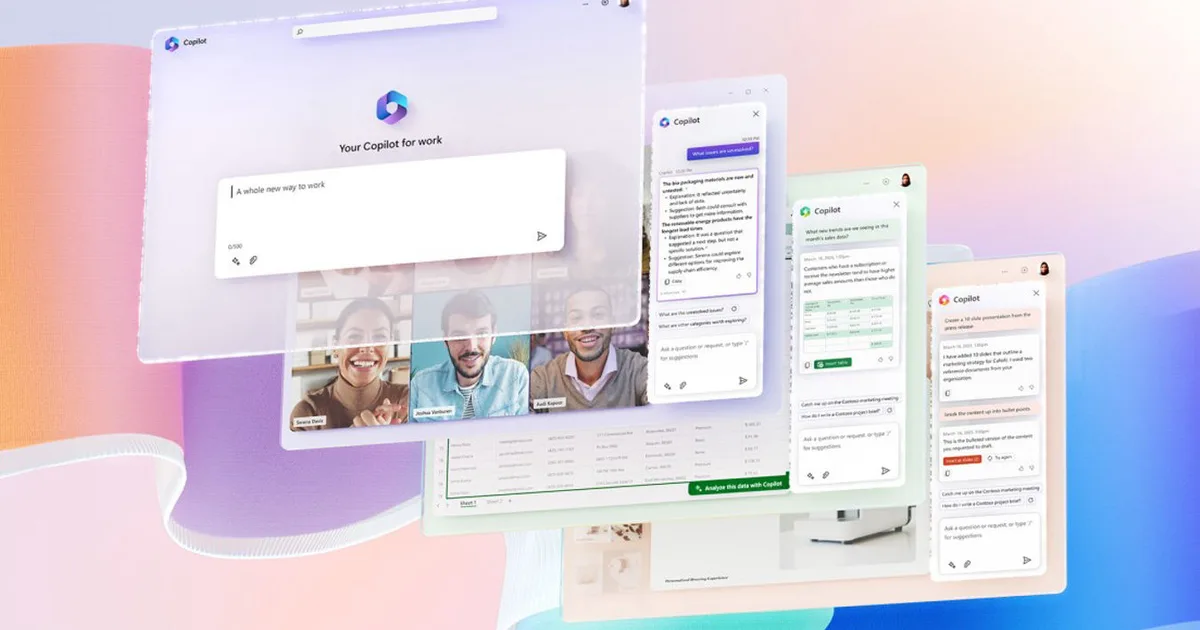

Check Point tested the technique against Grok and Microsoft Copilot, and the results point to a significant weakness. Notably, the method works through the chatbots’ ordinary web interfaces rather than their developer APIs, so attackers need no API key. That removes a barrier to misuse and makes the activity harder to detect.

- Data Theft Mechanism: The channel also works in reverse. Malware can encode sensitive information in the query parameters of the URL it asks the chatbot to visit, so the AI unwittingly delivers that data to the attacker’s domain when it fetches the page.
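
If defenders can see the URLs handed to a chatbot (for example through a TLS-inspecting proxy or DLP logging, which is an assumption here, not something Check Point describes), the exfiltration path in the bullet above tends to leave one visible trace: long, random-looking query values. The Python sketch below illustrates that common entropy heuristic. The function names `shannon_entropy` and `suspicious_params` and both thresholds are hypothetical choices for illustration, not part of any vendor’s tooling.

```python
import math
import re
from collections import Counter
from urllib.parse import unquote, urlsplit

# Hypothetical thresholds; tune them against your own traffic before relying on them.
MIN_VALUE_LENGTH = 32    # shorter values are rarely worth flagging
MIN_ENTROPY_BITS = 3.5   # bits per character; random hex tops out at 4, base64 near 6

# Values made up only of base64, base64url, or hex characters.
ENCODED_CHARS = re.compile(r"[A-Za-z0-9+/=_-]+")


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in Counter(text).values())


def suspicious_params(url: str) -> list[tuple[str, float]]:
    """Return (parameter name, entropy) for query values that look like encoded data."""
    flagged = []
    for pair in urlsplit(url).query.split("&"):
        name, _, value = pair.partition("=")
        value = unquote(value)  # decode %XX escapes but keep a literal "+" intact
        if len(value) >= MIN_VALUE_LENGTH and ENCODED_CHARS.fullmatch(value):
            entropy = shannon_entropy(value)
            if entropy >= MIN_ENTROPY_BITS:
                flagged.append((name, round(entropy, 2)))
    return flagged
```

For example, `suspicious_params("https://example.com/article?id=42&lang=en")` returns an empty list because both values are short. Legitimate tokens such as session IDs or signed links will also trip this check, so a hit is a lead for investigation rather than a verdict.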

Why This Threat is Difficult to Detect

What makes this threat particularly insidious is that it is not a new type of malware. It is a familiar command-and-control (C2) pattern routed through services that many businesses are actively adopting. If browsing-enabled AI services are reachable from every machine by default, an infected host can hide its C2 traffic behind trusted, innocuous-looking domains and easily evade detection.

Check Point emphasizes that the building blocks are ordinary. Its example uses WebView2, an embedded browser component found on many Windows systems. In this setup, an application can silently gather basic host information, open a hidden web view to an AI service, submit a URL for it to fetch, and read the returned commands. Each of these steps can easily pass for normal application behavior.

Recommendations for Security Teams

To counter this emerging threat, organizations should treat web-enabled chatbots like any other high-trust cloud application. Key strategies include:

- Monitor for Unusual Patterns: Watch for repetitive URL requests, automated or machine-regular timing, and shifts in traffic volume to AI services that deviate from typical human behavior.

- Limit Access: Consider restricting AI browsing features to managed devices and specific roles within your organization, rather than allowing access on every machine.

- Be Proactive: Track the automation-detection features that AI vendors are introducing, and adjust monitoring so that AI service destinations are treated as potential post-compromise communication channels.
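
The monitoring advice can be made concrete with a simple beaconing check over web-proxy logs. The sketch below is a minimal illustration, not Check Point’s method. It assumes proxy records that carry a timestamp, the internal host, and the destination domain, and it flags host and AI-domain pairs whose request intervals are nearly constant, a pattern more typical of malware polling for commands than of a person typing prompts. The `ProxyRecord` class, the `find_beacons` function, the domain list, and both thresholds are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean, pstdev

# Illustrative list only; confirm the hostnames your AI vendors actually use.
AI_DOMAINS = {"copilot.microsoft.com", "grok.com"}

# Hypothetical thresholds; tune them against your own baseline traffic.
MIN_REQUESTS = 10        # ignore pairs with too few requests to judge
MAX_JITTER_RATIO = 0.1   # stdev / mean of gaps; lower means more clock-like


@dataclass
class ProxyRecord:
    timestamp: float  # seconds since the epoch
    host: str         # internal machine that made the request
    domain: str       # destination hostname


def find_beacons(records: list[ProxyRecord]) -> list[tuple[str, str, float]]:
    """Return (host, domain, mean gap in seconds) for suspiciously regular AI traffic."""
    times = defaultdict(list)
    for rec in records:
        if rec.domain in AI_DOMAINS:
            times[(rec.host, rec.domain)].append(rec.timestamp)

    flagged = []
    for (host, domain), stamps in times.items():
        if len(stamps) < MIN_REQUESTS:
            continue
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        avg = mean(gaps)
        # A low coefficient of variation means requests arrive on a near-fixed schedule.
        if avg > 0 and pstdev(gaps) / avg <= MAX_JITTER_RATIO:
            flagged.append((host, domain, round(avg, 1)))
    return flagged
```

Real implants often add random jitter to their polling, so a fixed cutoff like this will miss some of them. It works best combined with the volume and role-based signals above, with any hit treated as a starting point for investigation.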

As we navigate this complex landscape where technology and security intersect, staying informed is crucial.

Are you committed to enhancing your beauty business with transformative technology? Don’t let security concerns hold you back. Equip yourself with the knowledge to safeguard your operations today, join the conversation, and explore how to elevate your brand securely and effectively!