Early Insights Reveal ChatGPT Health’s Fitness Evaluations May Trigger Unneeded Alarm

Experts Weigh In: Is AI Ready for Personal Health Insights?

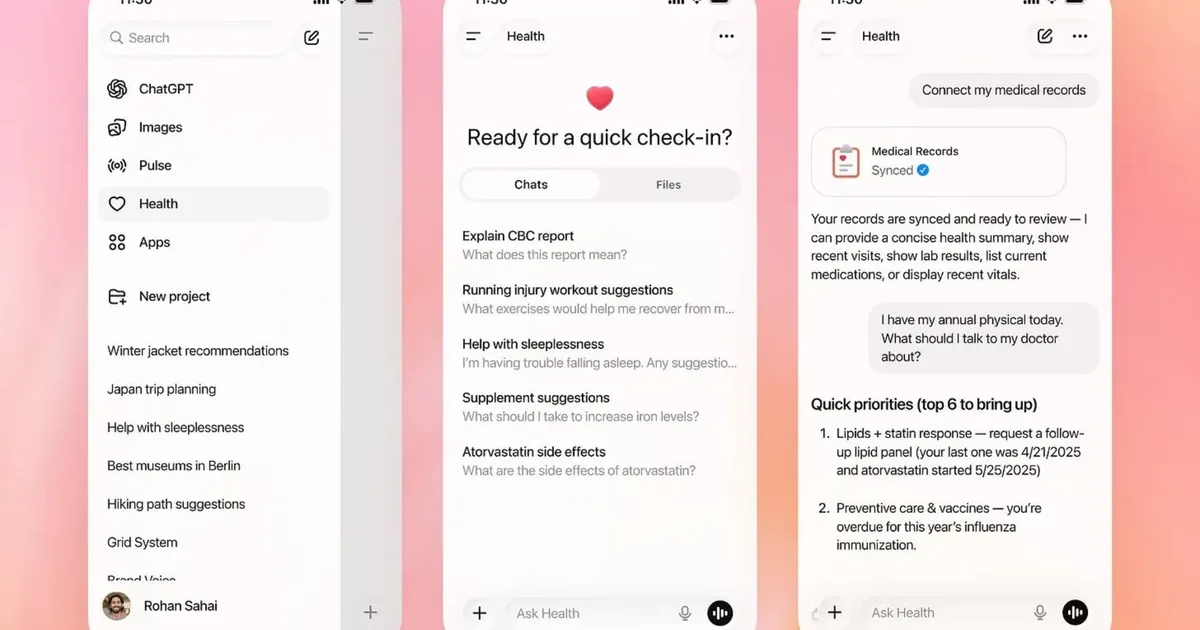

Earlier this month, OpenAI introduced a health-focused feature within ChatGPT, intended to give users a more secure space to explore sensitive subjects such as medical concerns and fitness trends. The launch highlighted ChatGPT Health’s ability to analyze data from popular wellness apps such as Apple Health, MyFitnessPal, and Peloton, promising tailored insights and long-term trend analysis. However, early reports suggest that OpenAI may have overstated the feature’s reliability.

Lack of Reliable Insights

In a test by Geoffrey A. Fowler of The Washington Post, ChatGPT Health was asked to evaluate a decade’s worth of Apple Health data. It returned a grade of F for cardiac health. A cardiologist who reviewed the result called the judgment “baseless” and said the individual’s actual heart disease risk was quite low.

A Closer Look at Reliability

Dr. Eric Topol of the Scripps Research Institute was blunt in his critique, saying that ChatGPT Health is not yet equipped to dispense medical advice. He pointed out that the tool depends on smartwatch data that is often unreliable. For instance, ChatGPT’s evaluation leaned heavily on Apple Watch estimates of VO2 max and heart rate variability, two metrics known to vary from one device to another. Research has shown that the Apple Watch’s VO2 max readings can underestimate actual fitness levels. Yet ChatGPT still treated these metrics as definitive indicators of health.

Fluctuating Evaluations

The inconsistencies didn’t end there. When the same health data was reassessed, the grades swung between F and B, and the tool occasionally ignored critical details such as recent blood tests or even basic demographics like age and gender. Anthropic’s Claude for Healthcare, which debuted around the same time, was also inconsistent, returning grades ranging from C to B minus for the same health data.

Both OpenAI and Anthropic emphasize that their tools are not substitutes for medical professionals and are meant only to provide general context. However, the confident yet erratic assessments these tools produce could needlessly alarm healthy users or falsely reassure those with real health concerns. AI may well be able to extract valuable insights from long-term health data, but these initial assessments suggest that feeding extensive fitness tracking data to the models currently produces more confusion than clarity.

Conclusion: The Path Ahead

As AI moves further into healthcare, it’s crucial to approach these tools with a discerning eye. The promise of personalized health insights is compelling, yet the current limitations underscore the importance of relying on medical professionals for accurate assessments. As the technology matures, these tools may come to improve our understanding of health without compromising safety or accuracy.

Feel empowered to take charge of your health journey. While the road to AI-driven insights is still being paved, never hesitate to seek professional guidance. Your well-being deserves the best.