Anthropic’s Claude: Mastering Industrial-Scale AI Model Distillation for Next-Gen Solutions

Anthropic has disclosed details of three large-scale campaigns aimed at extracting the capabilities of its AI model, Claude. Conducted by international labs, these operations generated over 16 million interactions through approximately 24,000 fraudulent accounts. The goal was to use Claude's outputs to improve their own AI models.

At its core, distillation means training a less capable model on the high-quality outputs of a more capable one. Distillation has legitimate uses, such as producing smaller and more affordable models for consumers. Unscrupulous actors, however, exploit the technique to acquire strong capabilities quickly, without the heavy investment that independent development normally requires.
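As a rough formalization, here is the textbook form of output-based distillation (a generic objective, not a description of any particular lab's method). The symbols are assumptions introduced for illustration: $D$ is a set of prompts $x$ paired with responses $y$ generated by the stronger "teacher" model, and $p_\theta$ is the smaller "student" model. The student is fit by ordinary maximum likelihood on the teacher's outputs:

$$
\mathcal{L}(\theta) = -\sum_{(x,\,y) \in D} \; \sum_{t=1}^{|y|} \log p_\theta\left(y_t \mid x,\, y_{<t}\right)
$$

Nothing in this objective requires access to the teacher's weights or internals, which is why large volumes of API responses are enough to make the technique work.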

Protecting Intellectual Property Like Anthropic’s Claude

Unchecked distillation poses a serious threat to intellectual property. Citing national security concerns, Anthropic has restricted access to Claude in China. However, the operators behind these campaigns bypassed those restrictions by routing their traffic through commercial proxy networks.

These networks use what Anthropic refers to as “hydra cluster” architectures, dispersing traffic across APIs and third-party cloud platforms. Because the infrastructure is so widely distributed, it has no single point of failure, which makes it hard to shut down. As Anthropic noted, “when one account is banned, a new one takes its place.”

In one notable incident, a single proxy network operated over 20,000 fraudulent accounts simultaneously. It mixed distillation requests with ordinary customer traffic to avoid detection. For enterprises, this means security teams need to rethink how they monitor cloud API traffic.

Furthermore, illicitly distilled models may not carry over the original model's safety measures, which creates significant national security risks. Developers in the U.S. build safeguards to prevent malicious actors from misusing their AI systems in areas such as cyberattacks or bioengineering. Cloned systems can lack these protections, allowing dangerous capabilities to find their way into the military and surveillance infrastructure of authoritarian governments.

If such distilled models are made openly accessible, the dangers multiply, as these potent abilities could escape the control of any individual government.

The Playbook for AI Model Distillation

The three campaigns followed a common operational playbook. They relied on fraudulent accounts and proxy services to gain access while concealing who was behind the requests. Their usage patterns differed markedly from legitimate use, indicating deliberate attempts to extract valuable capabilities.

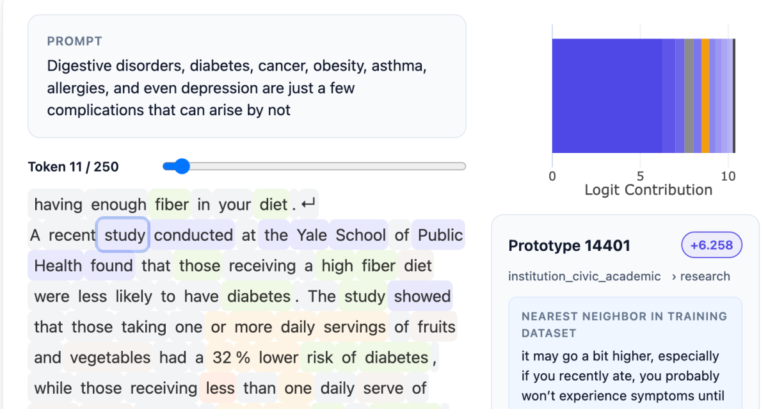

Anthropic attributed the campaigns targeting Claude using several indicators, including IP address correlation and request metadata. Each operation targeted specific capabilities, such as agentic reasoning, tool use, and coding.
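To illustrate what IP address correlation can look like in practice, here is a minimal sketch that groups accounts linked, directly or transitively, by a shared IP address. It is an assumption-laden illustration, not Anthropic's method: the `(account_id, ip_address)` log schema and the function name `cluster_accounts_by_shared_ip` are hypothetical, and real attribution would combine many more signals.

```python
from collections import defaultdict


def cluster_accounts_by_shared_ip(events):
    """Group accounts that are linked, directly or transitively, by a shared IP.

    `events` is an iterable of (account_id, ip_address) pairs (hypothetical schema).
    Returns a list of sets of account ids, largest cluster first.
    """
    parent = {}

    def find(x):
        # Union-find lookup with path halving.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    first_account_for_ip = {}
    for account, ip in events:
        find(account)  # register the account even if it shares no IP
        if ip in first_account_for_ip:
            union(first_account_for_ip[ip], account)
        else:
            first_account_for_ip[ip] = account

    clusters = defaultdict(set)
    for account in list(parent):
        clusters[find(account)].add(account)
    return sorted(clusters.values(), key=len, reverse=True)
```

Shared IPs alone are weak evidence, since offices, universities, and VPNs put many unrelated users behind one address. A cluster from a sketch like this is best treated as a lead for further review rather than proof of coordination.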

One campaign alone produced over 13 million exchanges focused on agentic coding and tool orchestration. Anthropic detected this effort while it was still active and matched its timing against competitors' public release timelines. When a new Claude model was released, the operators quickly redirected nearly half of their traffic to extract capabilities from it.

Another operation accumulated over 3.4 million requests directed toward computer vision, data analysis, and agentic reasoning. Its operators spread the requests across a varied set of accounts to obscure the fact that they were coordinated. Anthropic attributed this campaign by correlating request data with known profiles from competing labs. As the campaign progressed, the group tried to extract and reconstruct Claude's own reasoning patterns.

A third distillation effort targeted Claude with over 150,000 interactions, extracting reasoning abilities and grading methodologies. By prompting Claude to lay out its reasoning step by step, this group generated substantial amounts of training data. It also used Claude to produce alternative responses that steer around politically sensitive queries.

Individually, the requests in this campaign seemed innocuous, often appearing as simple prompts for data analysis. It was the sheer volume of repetitive requests aimed at the same capabilities that revealed an extraction effort was underway.
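The point that volume and repetition matter more than any single request can be sketched in code. The example below assumes each request has already been tagged with a capability label (for instance by an upstream classifier); the labels, function names, and thresholds are all hypothetical and chosen only for illustration.

```python
import math
from collections import Counter


def capability_concentration(labels):
    """Return (top_share, entropy_bits) for a list of capability labels.

    top_share: fraction of requests that fall in the most common category.
    entropy_bits: Shannon entropy of the label distribution; 0.0 means every
    request targeted the same capability.
    """
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no requests to analyze")
    top_share = max(counts.values()) / total
    entropy_bits = sum(
        (c / total) * math.log2(total / c) for c in counts.values()
    )
    return top_share, entropy_bits


def looks_like_extraction(labels, min_requests=1000, min_top_share=0.9):
    """Flag only when volume is high AND traffic is narrowly concentrated.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    if len(labels) < min_requests:
        return False
    top_share, _ = capability_concentration(labels)
    return top_share >= min_top_share
```

Requiring both conditions is the design choice that matters here: a handful of data-analysis prompts is normal, and a high-volume account with varied usage is normal, but high volume concentrated on one capability is the combination described above.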

Implementing Actionable Defenses

Protecting enterprise environments against such extraction attempts requires a multi-layered approach. Anthropic advocates behavioral fingerprinting and traffic classifiers designed to identify distillation patterns in API traffic.
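One simple form of behavioral fingerprinting is to check whether many accounts send prompts built from the same template. The sketch below is a toy version under stated assumptions: the `(account_id, prompt)` input format, the masking rules, and the `min_accounts` threshold are hypothetical, and a production classifier would use far richer features.

```python
import hashlib
import re
from collections import defaultdict


def template_fingerprint(prompt):
    """Hash a prompt after masking the parts that usually vary between requests."""
    text = prompt.lower()
    text = re.sub(r"\d+", "<num>", text)      # mask numbers
    text = re.sub(r"\s+", " ", text).strip()  # collapse whitespace
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:16]


def shared_templates(requests, min_accounts=3):
    """Return {fingerprint: set_of_accounts} for templates used by many accounts.

    `requests` is an iterable of (account_id, prompt) pairs (hypothetical schema).
    """
    accounts_by_fingerprint = defaultdict(set)
    for account, prompt in requests:
        accounts_by_fingerprint[template_fingerprint(prompt)].add(account)
    return {
        fingerprint: accounts
        for fingerprint, accounts in accounts_by_fingerprint.items()
        if len(accounts) >= min_accounts
    }
```

Popular prompts copied from tutorials will also match across accounts, so a result like this is a signal to combine with others, not a verdict on its own.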

IT leaders should also strengthen verification for the access pathways that are most commonly abused, particularly educational accounts and startup programs. Combining product-level and API-level protections is vital to reducing the risk of illicit distillation.

Monitoring for coordinated activity across large numbers of accounts should be a priority. This includes watching specifically for persistent elicitation of chain-of-thought outputs, which can be harvested as training data.
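As a minimal sketch of what tracking chain-of-thought elicitation might involve, the example below measures how often each account asks for step-by-step reasoning and flags accounts that do so persistently. Everything here is an assumption for illustration: the phrase list in `COT_PATTERN`, the `(account_id, prompt)` input format, and both thresholds are hypothetical, and a real system would likely rely on a trained classifier rather than keywords.

```python
import re
from collections import defaultdict

# Hypothetical phrase list, for illustration only.
COT_PATTERN = re.compile(
    r"step[- ]by[- ]step"
    r"|show your (?:work|reasoning)"
    r"|explain your reasoning"
    r"|chain[- ]of[- ]thought",
    re.IGNORECASE,
)


def cot_elicitation_rates(requests):
    """Return {account_id: (total_requests, fraction_asking_for_reasoning)}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for account, prompt in requests:
        totals[account] += 1
        if COT_PATTERN.search(prompt):
            hits[account] += 1
    return {account: (totals[account], hits[account] / totals[account])
            for account in totals}


def flag_persistent_elicitation(requests, min_requests=500, min_rate=0.8):
    """Return sorted account ids with high volume and a high elicitation rate.

    Both thresholds are illustrative assumptions, not recommended values.
    """
    rates = cot_elicitation_rates(requests)
    return sorted(
        account
        for account, (total, rate) in rates.items()
        if total >= min_requests and rate >= min_rate
    )
```

Asking a model to reason step by step is common in legitimate use, so the volume floor matters as much as the rate. Feeding the flagged accounts into a cross-account correlation step would cover the coordination aspect described above.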

Collaboration across the industry remains crucial. As attacks grow in both intensity and sophistication, timely and coordinated intelligence sharing among AI developers, cloud providers, and policymakers will be essential.

By sharing its findings on the distillation campaigns targeting Claude, Anthropic aims to give others a clearer picture of the threat landscape. For technology officers, establishing rigorous access controls around AI systems not only protects competitive advantage but also supports compliance and governance.

Staying current with the evolving AI landscape is essential. Guarding innovation while fostering responsible growth is a shared task, and working together is the way to navigate these challenges toward a secure and thriving future.