Ant Group Leverages Domestic Chips for Cost-Effective AI Model Training

Ant Group is using Chinese-made semiconductors to train its artificial intelligence models, a move aimed at cutting costs and reducing reliance on U.S. technology, according to insiders.

Embracing Domestic Technology

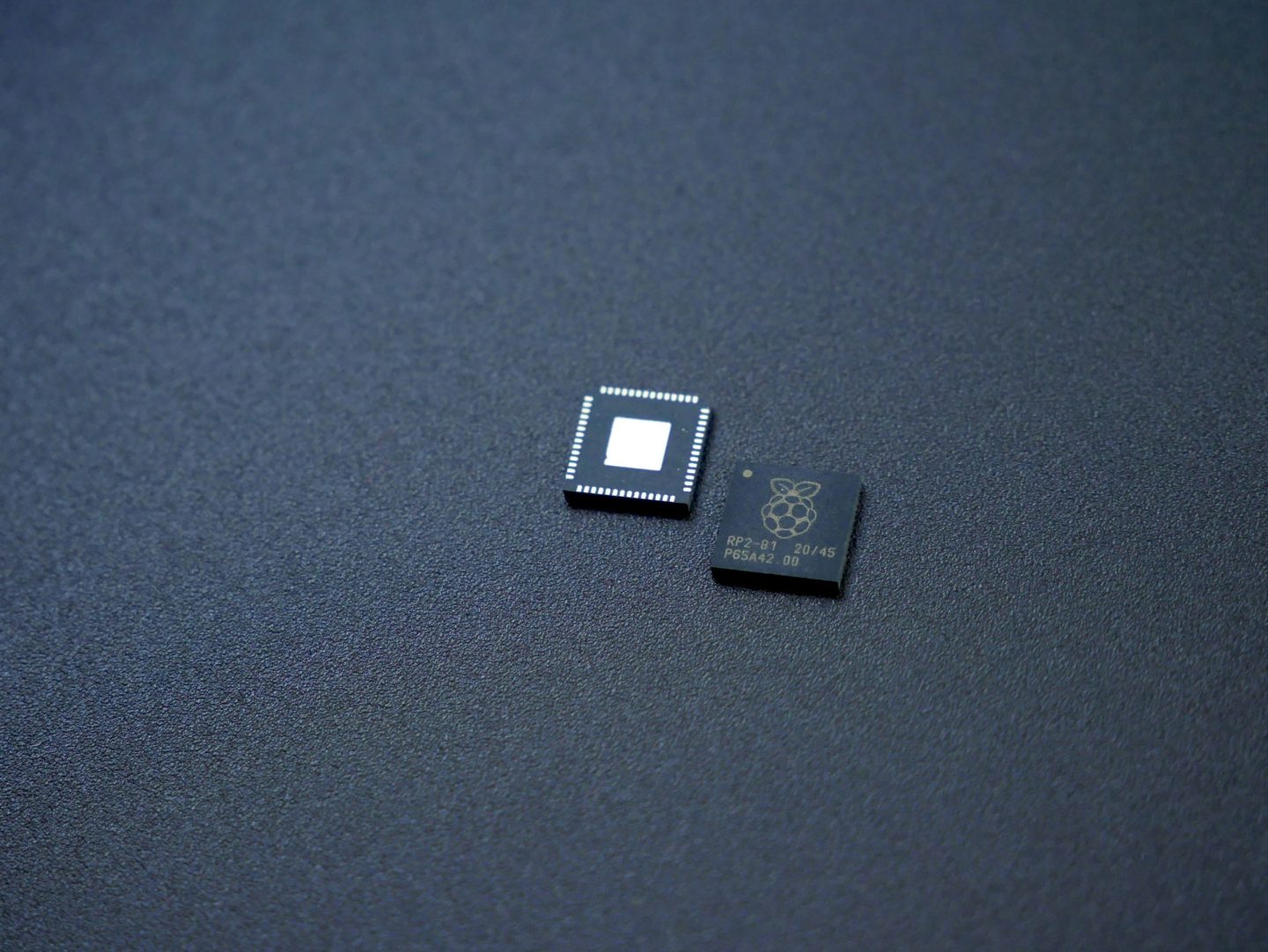

The Alibaba affiliate has begun using semiconductors from domestic suppliers, including chips tied to Alibaba and Huawei Technologies, to train large language models with the Mixture of Experts (MoE) method. According to the sources, the results are comparable to those achieved with Nvidia’s H800 chips. Ant still uses Nvidia chips in its AI development, but it is shifting toward alternatives from AMD and Chinese manufacturers for its newer models.

A New Era in AI Development

This shift highlights Ant’s growing presence in the competitive AI landscape, especially as companies look for cost-effective ways to train models. By experimenting with domestic hardware, Ant reflects a broader trend among Chinese firms trying to work around export restrictions that limit access to high-end chips such as Nvidia’s H800. Although the H800 is not Nvidia’s most advanced chip, it remains among the most powerful GPUs available to Chinese organizations.

Ant recently published a research paper claiming that its models sometimes outperform those created by Meta. Bloomberg, which reported the matter, has not independently verified these claims. If the models perform as claimed, the work could mark a significant step toward lowering the cost of AI applications in China and reducing dependence on foreign technology.

Understanding MoE and Its Advantages

An MoE model is divided into smaller sub-networks, known as experts, and a routing mechanism sends each piece of input to only the few experts best suited to handle it. Because only part of the model is active for any given input, the approach can reduce the computation a model needs, and it has gained traction among AI researchers and data scientists. It is akin to assembling a team of specialists, each focused on a specific part of a project. Ant has not disclosed specifics about its hardware suppliers, but the MoE technique holds promise for lowering training costs.
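To make the "team of specialists" idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is an illustration only and not Ant’s implementation: the function names, the toy experts, and the hard-coded gate scores are all hypothetical, and a real MoE model learns its gate scores and uses neural-network experts.

```python
import math

def softmax(scores):
    """Convert raw gate scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Hypothetical toy experts; real experts are feed-forward sub-networks.
EXPERTS = [
    lambda x: x + 1.0,
    lambda x: 2.0 * x,
    lambda x: x * x,
    lambda x: -x,
]

if __name__ == "__main__":
    # Gate scores are hard-coded here; a trained router would compute them.
    scores = [0.0, 0.0, 5.0, 5.0]
    print(route_top_k(scores, k=2))
    print(moe_forward(3.0, EXPERTS, scores, k=2))
```

In this sketch only two of the four experts run for a given input, which is the source of the compute savings: the model’s total parameter count can grow while the work done per input stays roughly constant.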

Training these models typically requires high-performance GPUs, which are often prohibitively expensive for smaller firms. Ant’s research aims to lower that barrier, as the title of its paper signals with the stated goal of scaling models “without premium GPUs.”

Divergent Routes in AI Strategy

Ant’s approach with MoE diverges sharply from Nvidia’s strategy. Nvidia CEO Jensen Huang argues that demand for computing power will keep growing, even with the arrival of more efficient models such as DeepSeek’s R1. In his view, companies will seek more powerful chips to drive revenue growth rather than cheaper alternatives to cut costs.

According to Ant’s paper, training on one trillion tokens (the basic units of data that AI models process) cost around 6.35 million yuan (roughly $880,000) using conventional high-performance hardware. With its optimized training method and lower-specification chips, Ant reduced this to approximately 5.1 million yuan, a saving of about 20%.
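The size of the saving follows directly from the two figures reported above. The short calculation below is a sketch: the dollar figure for the optimized run is not stated in the article and is derived here by assuming the same exchange rate implied by the article’s 6.35 million yuan to $880,000 conversion.

```python
# Figures as reported from Ant's paper, per one trillion tokens.
BASELINE_COST_YUAN = 6_350_000
OPTIMIZED_COST_YUAN = 5_100_000
BASELINE_COST_USD = 880_000  # approximate conversion given in the article

def savings(baseline, optimized):
    """Return the absolute saving and the saving as a fraction of baseline."""
    saved = baseline - optimized
    return saved, saved / baseline

saved_yuan, saved_fraction = savings(BASELINE_COST_YUAN, OPTIMIZED_COST_YUAN)

# Assumption: reuse the exchange rate implied by the article's own conversion.
implied_yuan_per_usd = BASELINE_COST_YUAN / BASELINE_COST_USD
optimized_cost_usd = OPTIMIZED_COST_YUAN / implied_yuan_per_usd

print(f"Saved {saved_yuan:,} yuan per trillion tokens ({saved_fraction:.1%})")
# prints: Saved 1,250,000 yuan per trillion tokens (19.7%)
print(f"Optimized run in USD (derived): about ${optimized_cost_usd:,.0f}")
```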

Real-World Applications of Ant’s Innovations

Ant intends to apply its newly developed models, Ling-Plus and Ling-Lite, to industrial AI use cases in fields such as healthcare and finance. Earlier this year, the company acquired Haodf.com, a Chinese online medical platform, to further its goal of deploying AI in healthcare. Ant also operates other AI-driven services, including the virtual assistant app Zhixiaobao and the financial advisory platform Maxiaocai.

In the words of Robin Yu, CTO of Beijing-based AI firm Shengshang Tech, “If you find one point of attack to beat the world’s best kung fu master, you can still say you beat them, which is why real-world application is essential.”

Pioneering Open-Source AI Models

Ant has made its models open source. Ling-Lite has 16.8 billion parameters and Ling-Plus has 290 billion. For comparison, the closed-source GPT-4.5 is estimated to have around 1.8 trillion parameters, roughly six times as many as Ling-Plus.

Despite these promising developments, the paper acknowledges that training models remains fraught with challenges. Minor adjustments in hardware or model architecture during the training phase can lead to unstable performance, including unexpected spikes in error rates.
